Lean Startup, day 9: Benchmarking Existing Solutions

In the previous posts, we explored the pain points of our customer segments, and the existing solutions they found.

Now it’s time to assess these existing solutions, in terms of features, price, etc. It’s time for us to benchmark alternative solutions.

What Is Benchmarking?

Benchmarking literally means defining a standard in order to make comparisons. By extension, a competitive benchmark is a comparative analysis of the competition. It consists of listing the competitors of a product and comparing them based on a set of performance metrics.

The objective is to identify best practices and performance gaps, which are opportunities for new products, or key differentiators. It’s a difficult exercise, because competitors often don’t share their performance metrics - or because you often don’t want to ask - so you need to assess them yourself.

For us, benchmarking is also a way to test the market in terms of pricing. The best way to know how much we should charge for our product is to determine how much our competitors charge for similar products. This affects the potential revenue, which in turn determines the profitability of the project.

What If There Are No Competitors?

Startups often come with ideas that are so disruptive that they can’t find any existing competitor. That’s what happened to us at the beginning, too: a quick Google search on the terms “admin on REST” returned a few open-source libraries, but no commercial product.

But in fact, the absence of competitors should be considered a very bad sign. If no other product occupies the landscape, the reason is often that:

- the problem isn’t worth solving, or

- solving the problem is too expensive for the customer segment

And we can’t overcome either of these limitations with great engineering or great marketing. So it’s actually better if there are competitors.

And usually there are, but you have to look at a broader range of products. For us, the key to discovering real competitors was the problem interviews: all the customers we interviewed who shared the pain points had found a way to deal with them. It was often a very manual way, something far from ideal (like Excel + import/export or phpMyAdmin), but the competition existed.

Admin-as-a-Service Competitors List

That leads us to look further. We not only consider products doing exactly what we want to offer (an admin builder on REST APIs), but also some that offer part of the functionality, or include it entirely as part of a larger feature set. A large part of the difficulty is to learn how customers and competitors name their service (like “backend-as-a-service”). Here is the list of product categories we decide to consider.

- Excel and other spreadsheet editors (Numbers, LibreOffice, Google Sheets)

- phpMyAdmin

- Admin generator frameworks (e.g. Django Admin, Sonata admin, Rails admin, etc.)

- Backend as a service, or database as a service tools, which may include an admin part (Parse, orchestrate.io, Kinvey, etc.)

- API gateway services (API Umbrella, Mashape Kong, etc.)

- Online Business Dashboard services (KissMetrics, Geckoboard, Klipfolio, dashing.io, BIME analytics, etc.)

- BI solutions (Tableau, QlikView, etc.), which are an extension of the previous category

After about a day of searching, we easily find more than a hundred products. Now we’re talking! That means there is a market, and it is not negligible in size. But assessing the performance of all these products is a huge amount of work, so we choose to limit ourselves to 10 services at most.

Some are very popular, some are not. We quickly eliminate the ones for which we can find no customer feedback, or support forums (which are a good sign of activity). We don’t eliminate the ones that we discovered during the problem interviews, because those are the services our customers will compare us to. We eliminate the services that do too much (and that are extremely expensive) like full-blown BI solutions, and anything from Oracle, Microsoft or IBM.

Here is the list we finally settled on:

- Excel

- phpMyAdmin

- Django Admin

- ng-admin

- KissMetrics

- Geckoboard

- Parse

Note: Had we narrowed down our customer segment, we could also have restricted the list to services that target this segment. In retrospect, the lack of proper customer segmentation beforehand led us to a list where not all products were relevant.

Performance Metrics To Compare

It’s hard to determine the performance metrics to use at first. There are the obvious ones (like pricing), but most metrics appear when you study one particular product with a strong differentiation.

It’s also hard to determine metrics that are objective, and that prospects in our customer segment can replicate. For instance, notions of design are very subjective.

It’s hard to determine metrics that you can use in an aggregated, one-dimension scale - sort of like the “good to bad” scale that customers quickly build in their minds.

Finally, most products don’t compare easily with their competitors, and that’s on purpose. If you’ve ever compared mobile subscriptions, for instance, you know that telcos do all they can to confuse consumers and push them to choose not objectively, but based on brand. In our case, the pricing model is a good example: some services are priced per user per year, some per number of requests, some per number of API connectors, etc.
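One way to compare such heterogeneous pricing models is to convert each of them to a monthly cost for a single usage profile. The sketch below is only an illustration: the plan names, prices, and usage numbers are hypothetical placeholders, not figures from our benchmark, and the three pricing models are simplified versions of the ones mentioned above.

```python
def monthly_cost(plan, usage):
    """Convert a plan to a monthly cost for the given usage profile."""
    model = plan["model"]
    if model == "per_user_per_year":
        # Yearly price per user, spread over 12 months
        return plan["price"] * usage["users"] / 12
    if model == "per_1000_requests":
        # Price charged for each block of 1000 API requests
        return plan["price"] * usage["requests_per_month"] / 1000
    if model == "per_connector_per_month":
        # Monthly price for each API connector
        return plan["price"] * usage["connectors"]
    raise ValueError(f"unknown pricing model: {model}")


# HYPOTHETICAL usage profile for our ideal customer
usage = {"users": 5, "requests_per_month": 100_000, "connectors": 3}

# HYPOTHETICAL plans, one per pricing model
plans = {
    "Plan A": {"model": "per_user_per_year", "price": 240.0},
    "Plan B": {"model": "per_1000_requests", "price": 1.0},
    "Plan C": {"model": "per_connector_per_month", "price": 30.0},
}

for name, plan in plans.items():
    print(name, monthly_cost(plan, usage))
```

The result depends heavily on the usage profile you pick, so it is worth running the comparison with the numbers your interviewed customers gave you rather than with a guess.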

In conclusion, be prepared to revise your metrics often, and don’t try to be too scientific.

We settled on the following metrics:

- Pricing

- Feature set

- UI modernity

- Quality / versatility of REST connectors

- Ability to edit the data

For each of these, we gave three values according to the judgement our ideal customer (Peter) would give: “bad”, “average”, and “good”. That’s not very scientific, but you won’t be able to do much more precise ratings.
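To make this concrete, here is a minimal sketch of how such a three-value scale can be encoded and aggregated into a single score. The product names and ratings are hypothetical placeholders, not our actual findings, and the unweighted sum is an assumption chosen for simplicity.

```python
# Ordinal scale: three possible values per metric
SCORES = {"bad": 0, "average": 1, "good": 2}

# The five metrics we settled on
METRICS = ["pricing", "feature set", "UI modernity", "REST connectors", "data editing"]

# HYPOTHETICAL ratings for placeholder products (not the results of our benchmark)
ratings = {
    "Product A": {
        "pricing": "good",
        "feature set": "average",
        "UI modernity": "bad",
        "REST connectors": "bad",
        "data editing": "good",
    },
    "Product B": {
        "pricing": "average",
        "feature set": "good",
        "UI modernity": "good",
        "REST connectors": "good",
        "data editing": "bad",
    },
}


def total_score(product_ratings):
    """Sum the ordinal scores over all metrics (unweighted)."""
    return sum(SCORES[product_ratings[metric]] for metric in METRICS)


def rank(all_ratings):
    """Return product names sorted from best to worst total score."""
    return sorted(all_ratings, key=lambda name: total_score(all_ratings[name]), reverse=True)


print(rank(ratings))
```

A plain sum treats all metrics as equally important. If your ideal customer cares more about one of them, a weighted sum is a small change.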

You don’t need too many metrics, because then you will have trouble determining groups and patterns. For instance, with two dimensions, you can easily place all the products on a scatter plot, and draw circles around the clusters that appear on the paper. With five dimensions, it’s harder - unless you remember how to compute planar projections.
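With a three-value scale and two metrics, the scatter plot is really a 3x3 grid, and the clusters are the cells that contain several products. The following sketch groups products that way. Again, the products and ratings are hypothetical placeholders used only to show the idea.

```python
from collections import defaultdict

# Ordinal scale: three possible values per metric
SCORES = {"bad": 0, "average": 1, "good": 2}

# HYPOTHETICAL ratings on two metrics (not the results of our benchmark)
ratings = {
    "Product A": {"pricing": "good", "feature set": "bad"},
    "Product B": {"pricing": "good", "feature set": "bad"},
    "Product C": {"pricing": "bad", "feature set": "good"},
}


def clusters(all_ratings, x_metric, y_metric):
    """Group products sharing the same (x, y) position on the grid."""
    groups = defaultdict(list)
    for name, product_ratings in all_ratings.items():
        point = (SCORES[product_ratings[x_metric]], SCORES[product_ratings[y_metric]])
        groups[point].append(name)
    return dict(groups)


for point, names in clusters(ratings, "pricing", "feature set").items():
    print(point, names)
```

Running it for each pair of metrics is a cheap substitute for planar projections when you only have a handful of products.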

What We Learn From This Exercise

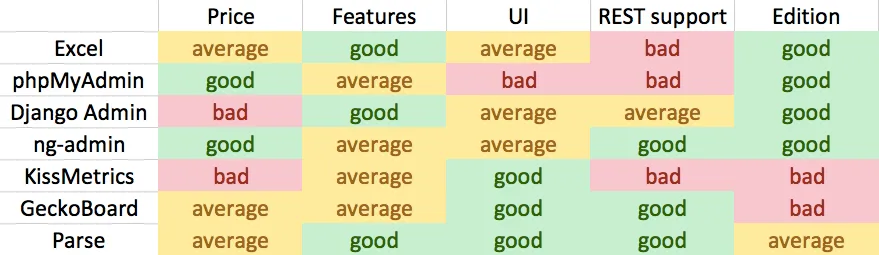

Here is the chart that sums up our findings:

The biggest difference between admin-as-a-service and solutions like online dashboards and BI tools is that, even though they access data through APIs, they are all read-only, whereas we propose to edit those data sources via our solution.

When studying online dashboard solutions, we can see that the average price is around $20 per month per user. That’s a price that customers are willing to pay just to access their data in a visual way. Perhaps they can pay as much, or even more, to get the ability to edit this data? BI solutions are much more expensive, especially their graphing features.

The UI quality seems a great differentiator at first, except that we also see many products that are both ugly and successful. So while a flashy UI seems to be a prerequisite to get new customers, it’s really the feature set that seems to make the difference.

Backend-as-a-Service solutions target IT customers, while we chose to target marketing customers at that stage.

Also, REST support is expanding, but not broadly implemented (partly because REST isn’t a standard). The good news is that because online dashboards and BI solutions succeed in accessing data from APIs, we can do it too!

All in all, this exercise does not prove that there is a potential market for the solution we imagine. But it doesn’t prove the contrary either ;)

Next Steps

Now that we have a clearer view of the competition, we have reached the end of what we can learn just by studying the market. To learn more, it’s time to switch gears and start building something. That’s what we’ll explore in the next post in this series.

Credits:

Thumbnail picture: Curves, by Dragan

Authors

Marmelab founder and CEO, passionate about web technologies, agile, sustainability, leadership, and open-source. Lead developer of react-admin, founder of GreenFrame.io, and regular speaker at tech conferences.