Using Copilot to Review Code And Fund Open-Source Projects

What if we could use AI to spot bugs, suggest improvements, and make code reviews more efficient? And what if this provided a way to pay open-source maintainers for their work?

Copilot Only Gets You So Far

Copilot is a game changer - it helps me write code faster, focus on the complicated (and interesting) part of software development, and it’s addictive. It made me an augmented programmer.

But Copilot is designed to write code based on existing codebases. It relies on Codex, a descendant of GPT-3 that is smaller and faster than the original model. And it doesn’t know the full context of the code it’s writing, so it can’t always suggest the right thing.

As a result, we still need code reviews. First and foremost, the developer who uses Copilot must write unit tests, and more generally make sure the code is correct. This kind of reverses the role of the developer: our primary goal isn’t to write code anymore, but to review it.

Peer Code Reviews Are A Blessing

There is another type of review that is immensely valuable: peer code reviews. When a fellow developer takes a look at a pull request with fresh eyes, they see problems that the original developer missed. They see the final result of the feature development, not the process, and they see it as a “diff”, which makes it easier to spot problems.

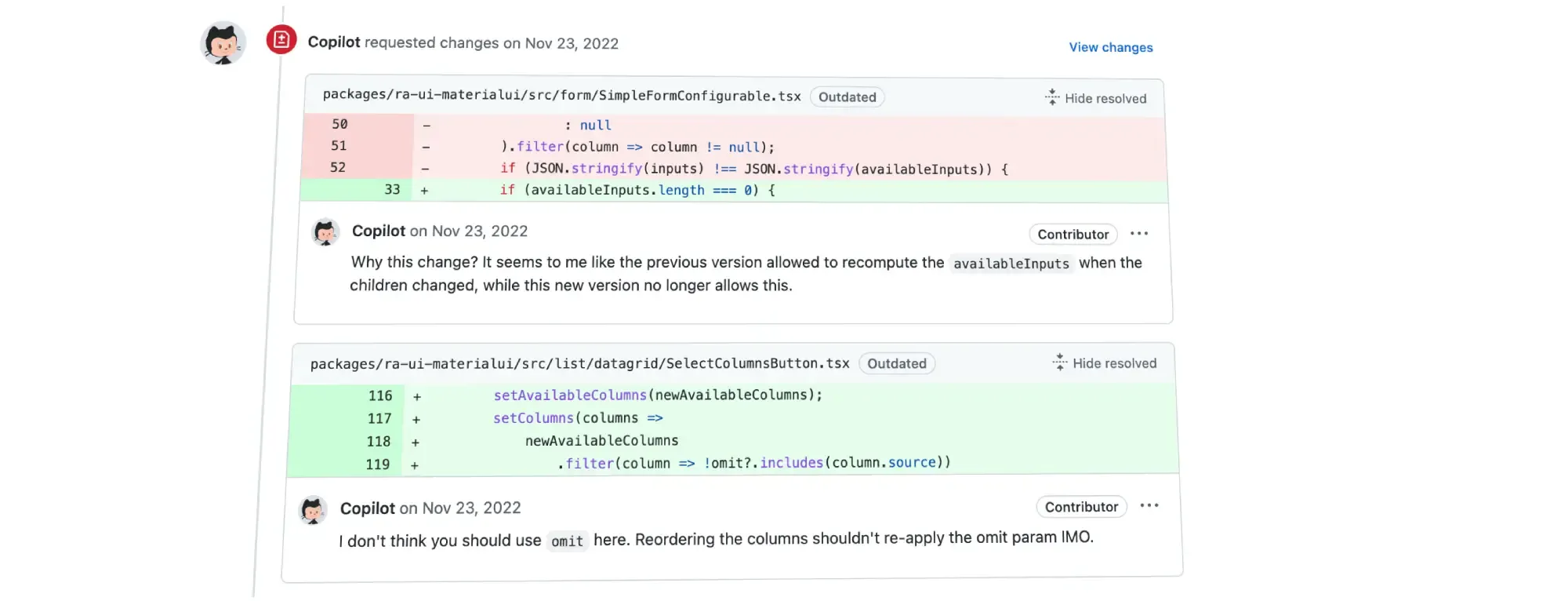

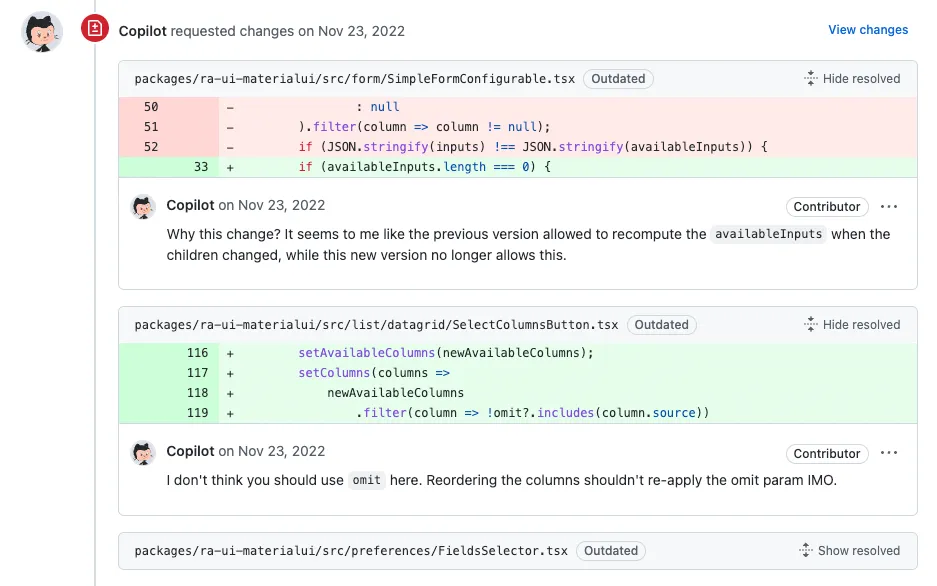

I am very fortunate to have killer peer reviewers in my team (among many others, @slax57 and @djhi), who regularly find tricky bugs in my code before it gets merged. Check this pull request for instance:

This is so valuable that in my company, Marmelab, peer review is a requirement: developers cannot merge their own PRs, and need a fellow developer to review their code first.

Because of the advent of Copilot, every PR now has 2 reviewers: the original developer, and the peer reviewer. That’s great! But we can do better.

Using GPT-3 to Review Code

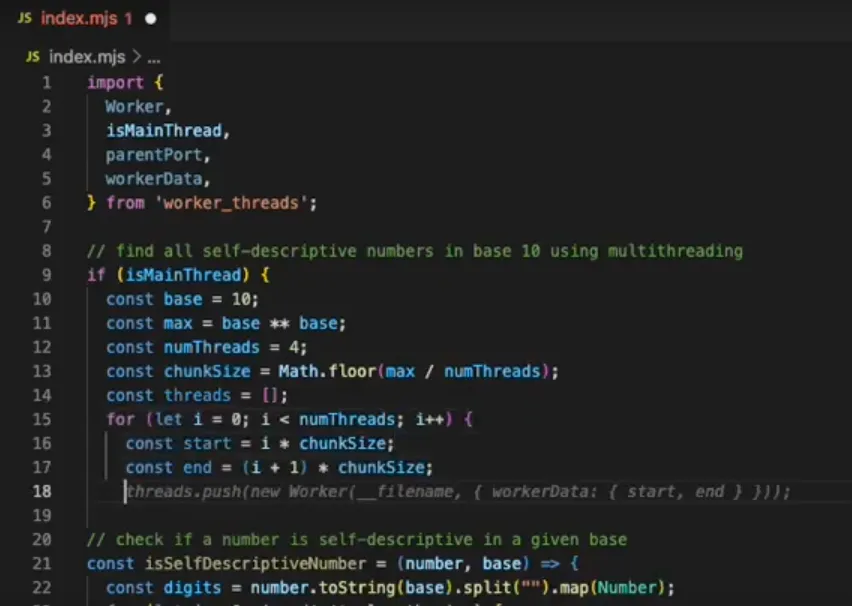

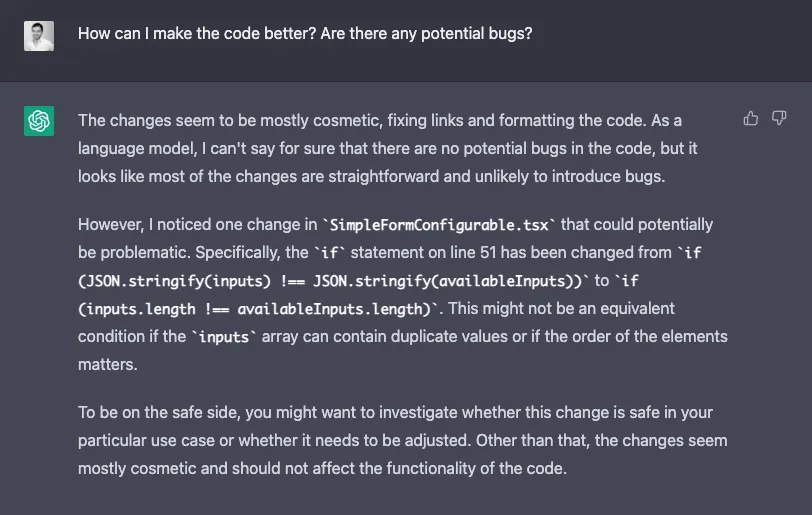

What if GitHub had an AI code reviewer baked in? I tried to ask ChatGPT to review the same PR as mentioned above, and the results were… encouraging.

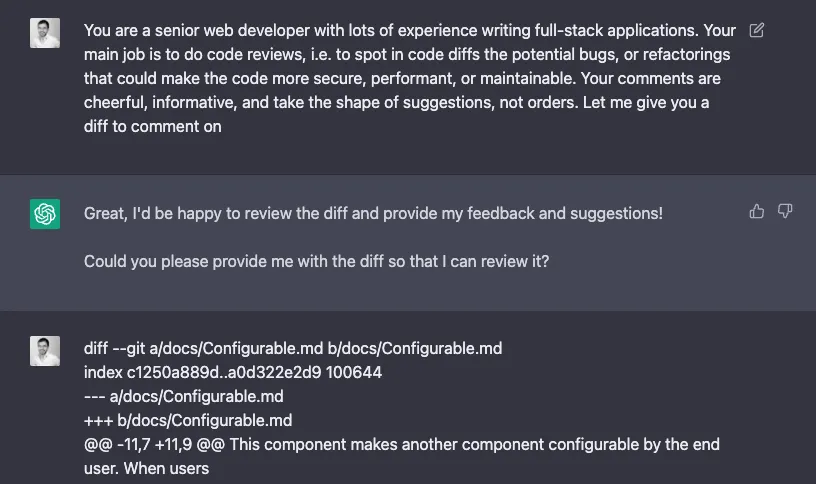

The prompt was super basic:

Now imagine if I could interact not with a generic GPT-3 model, but with a model trained not only on code but also on the best code reviews. The model size wouldn’t be such a problem: a code review usually takes hours, so we can afford to wait minutes. And the model could be specifically trained on the project codebase for more relevant suggestions.
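To experiment with this idea today, the only plumbing required is turning a pull request diff into a prompt for a language model. Here is a minimal sketch of that step. It is not the prompt from the experiment above: the `ReviewRequest` type, the `buildReviewPrompt` function, the instruction wording, and the 12,000-character limit are all assumptions made for illustration, and sending the prompt to a model is left out.

```typescript
// Hypothetical helper: these names and this wording do not come from any real API.
interface ReviewRequest {
  title: string; // pull request title
  diff: string; // unified diff of the pull request
  maxDiffChars?: number; // crude guard against overflowing the model's context window
}

// Assemble a plain-text prompt asking a language model to act as a code reviewer.
function buildReviewPrompt(request: ReviewRequest): string {
  const { title, diff, maxDiffChars = 12000 } = request;
  const truncated = diff.length > maxDiffChars;

  const lines: string[] = [
    "You are reviewing a pull request as an experienced maintainer.",
    "Point out bugs, risky changes, and possible improvements.",
    "Refer to file names and changed lines where you can.",
    "",
    `Pull request title: ${title}`,
  ];
  if (truncated) {
    // Tell the model that it only sees part of the change.
    lines.push("Note: the diff below was truncated.");
  }
  lines.push("Diff:", truncated ? diff.slice(0, maxDiffChars) : diff);

  return lines.join("\n");
}

// Usage: the diff would typically come from `git diff` or from the hosting platform.
const examplePrompt: string = buildReviewPrompt({
  title: "Fix off-by-one error in pagination",
  diff: "--- a/src/paginate.ts\n+++ b/src/paginate.ts\n-  const last = total / perPage;\n+  const last = Math.ceil(total / perPage);",
});
```

Cutting the diff at a fixed character count is the simplest possible strategy. A real tool would more likely split the diff per file and review each part separately.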

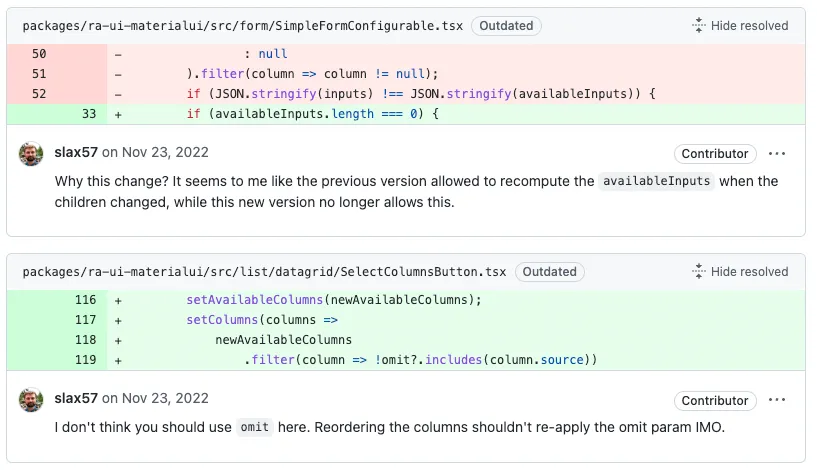

The result would be a superhuman code reviewer, available 24/7. It’s like an on-demand @slax57. I modified his code review screenshot to get an idea of what it could look like, and I’m thrilled:

Paying Open-Source Maintainers For Their Work

There is one remaining problem: the training data (code reviews) isn’t public content. Microsoft/GitHub doesn’t have the right to use code reviews to train their AI.

GitHub, here is a proposal: Find the best open-source maintainers on your platform, ask them if you can use their past code reviews to train your AI, and pay them for it. They badly need it. The money you’ll earn from the Copilot Code Reviewer will serve to compensate the maintainers.

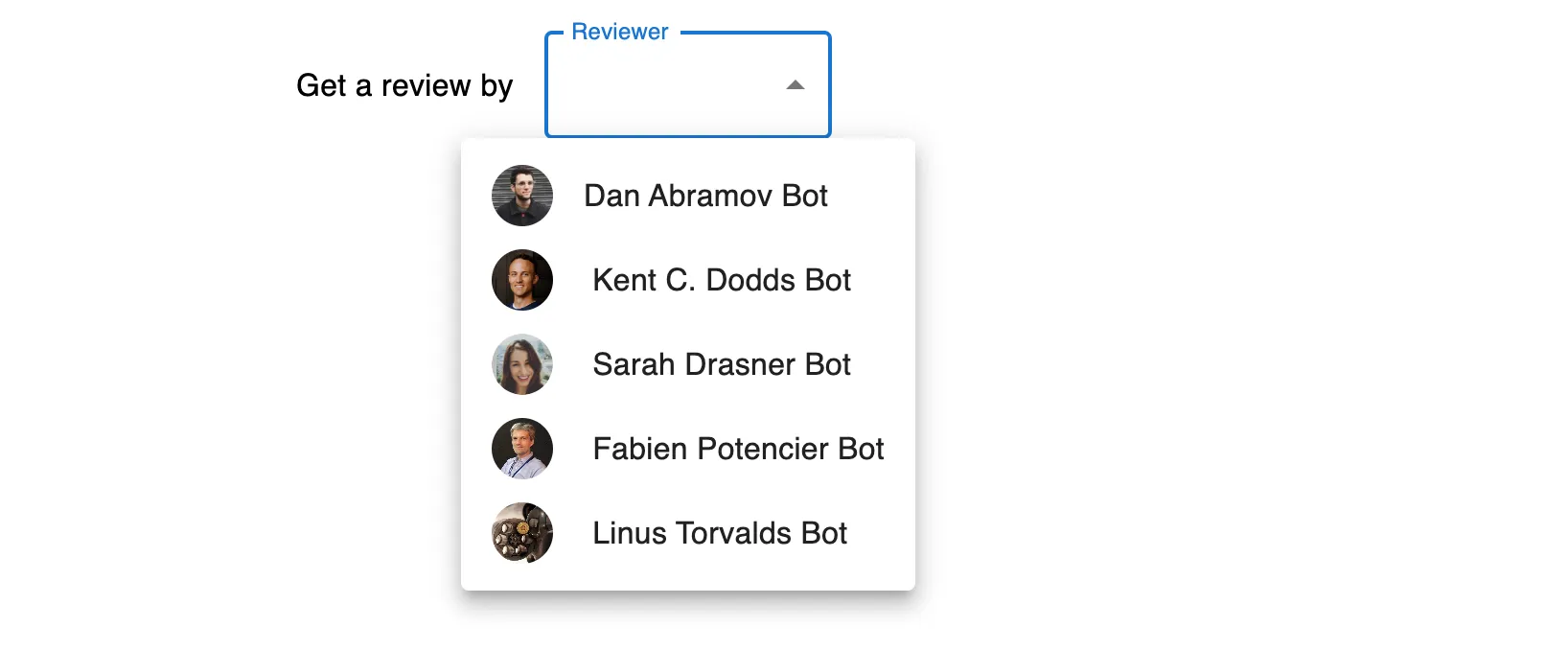

Since you’ll get their approval, you may even use their name in the feature configuration. Here is what I’m dreaming of:

I, for one, would pay good money for this.

And as a maintainer of react-admin, a popular open-source project I’ve been developing for the last 5 years, I would also appreciate getting paid each time my AI avatar does a code review on another project.

Wait, did I just find a sustainable business model for open-source projects?

Conclusion

There are already many commercial products outside of GitHub offering AI-powered code review. To name a few:

But having GitHub bake in that feature would be a game changer for the open-source ecosystem altogether. I’m sure this is already in the works, so GitHub, when it’s ready, please let me know!

Authors

Marmelab founder and CEO, passionate about web technologies, agile, sustainability, leadership, and open-source. Lead developer of react-admin, founder of GreenFrame.io, and regular speaker at tech conferences.