How to dump your Docker-ized database on Amazon S3

We sometimes use Docker for our staging environments. Putting code in staging or production also means backing up the data. This post describes how we regularly upload database dumps directly from a Docker container to Amazon S3.

Dumping Docker-ized Database on Host

The first step is to create a dump of the database. Let’s say we have an awesomeproject_pgsql container:

    #!/bin/bash

    # Configuration
    CONTAINER_NAME="awesomeproject_pgsql"
    FILENAME="awesomeproject-`date +%Y-%m-%d-%H:%M:%S`.sql"
    DUMPS_FOLDER="/home/awesomeproject/dumps/"

    # Backup from docker container
    docker exec $CONTAINER_NAME sh -c "PGPASSWORD=\"\$POSTGRES_PASSWORD\" pg_dump --username=\$POSTGRES_USER \$POSTGRES_USER > /tmp/$FILENAME"
    docker cp $CONTAINER_NAME:/tmp/$FILENAME $DUMPS_FOLDER
    docker exec $CONTAINER_NAME sh -c "rm /tmp/awesomeproject-*.sql"

After setting a few configuration variables to ease re-use of this script, we run a dump of our PostgreSQL database via the docker exec command. For a MySQL database, the process would be much the same: just replace pg_dump with mysqldump. We put the dump into the container’s /tmp folder.
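For the MySQL case mentioned above, the inner command might look like the following sketch. It assumes the official mysql image from Docker Hub, which is configured through the MYSQL_USER, MYSQL_PASSWORD and MYSQL_DATABASE variables. The awesomeproject_mysql container name and the two helper function names are hypothetical.

```shell
#!/bin/bash

# Hypothetical container name, for illustration only.
CONTAINER_NAME="awesomeproject_mysql"
FILENAME="awesomeproject-$(date +%Y-%m-%d-%H:%M:%S).sql"

# Print the command that will run *inside* the container.
# Single quotes keep the $MYSQL_* variables unexpanded on the host.
mysql_dump_command() {
    local target="$1"
    printf '%s' 'mysqldump --user="$MYSQL_USER" --password="$MYSQL_PASSWORD" "$MYSQL_DATABASE" > '"$target"
}

# Run the dump in the container (requires Docker and a running container).
dump_mysql() {
    docker exec "$CONTAINER_NAME" sh -c "$(mysql_dump_command "/tmp/$FILENAME")"
}

# Show the inner command without touching Docker.
mysql_dump_command "/tmp/$FILENAME"; echo
```

Printing the inner command first is a cheap way to check the quoting before running dump_mysql against a real container.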

Note the sh -c command. This way, we can pass a whole command (including file redirections) as a string, without worrying about conflicts between host and container paths.

Database credentials are passed via environment variables. Note the \ before each $ inside the Docker shell command: it is important, as we want these variables to be expanded inside the Docker container, not by the host shell when it parses the command. In this example, we’re using the official PostgreSQL image from Docker Hub, setting these variables at container creation.

We then copy the file from the container to the host using the docker cp command, and clean the container’s temporary folder.
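The escaping rule can be tried without Docker. In this minimal sketch, a child shell started with env stands in for the container; the alice value and the dump.sql file name are made up for the demonstration.

```shell
#!/bin/bash

# Host-side variable: expanded by the host shell before sh -c ever runs.
FILENAME="dump.sql"

# \$POSTGRES_USER stays literal here; $FILENAME is substituted immediately.
inner_command="echo user=\$POSTGRES_USER file=$FILENAME"
printf 'Command sent to the inner shell: %s\n' "$inner_command"

# Stand-in for the container: a child shell with its own POSTGRES_USER.
result=$(env POSTGRES_USER=alice sh -c "$inner_command")
printf 'Inner shell output: %s\n' "$result"
```

The first line printed still contains the literal $POSTGRES_USER, while the file name has already been filled in by the host. Only the inner shell resolves the user name.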

Keeping Only Last Backups

We generally keep data backups for a week. We then have 7 days to detect data anomalies and to restore a dump. Some developers prefer to keep another backup per month, but except in very sensitive environments, month-old data is too old to be useful. So, let’s focus on the last 7 days:

    # Keep only 7 most recent backups
    cd $DUMPS_FOLDER && (ls -t | head -n 7 ; ls -t) | sort | uniq -u | xargs --no-run-if-empty rm
    cd $DUMPS_FOLDER && bzip2 --best $FILENAME

The first command probably looks like voodoo. Let’s explain it. First, we go into the dumps folder. We repeat the cd on the following line so that each line works on its own. Inside a script the directory change actually persists, so the repetition is redundant but harmless. Chaining with && also ensures that nothing is deleted if the folder cannot be entered.

The (ls -t | head -n 7 ; ls -t) part groups two commands in a subshell. They run one after the other, and their combined output goes to the standard output. We list (ls) our files by modification time (-t), newest first, and keep the first 7 lines (head -n 7).

In addition, we also display all available files. So, all files we should keep are displayed twice.

Finally, we keep only the lines that appear once. As uniq only compares adjacent lines, we sort the list first, then apply uniq -u, and rm each result. The --no-run-if-empty option just prevents an error when no file needs to be deleted.
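To see the retention pipeline at work without risking real dumps, here is a small sketch that runs it in a throwaway directory. It assumes GNU coreutils (touch -d, seq -w, xargs --no-run-if-empty); the file names and dates are invented. Because uniq -u only compares adjacent lines, the sketch sorts the combined listing before filtering it.

```shell
#!/bin/bash
set -eu

# Work in a temporary directory so that no real dump is touched.
workdir=$(mktemp -d)

# Create 10 fake dumps, one per day, with matching modification times.
for day in $(seq -w 1 10); do
    touch -d "2016-01-$day 23:00:00" "$workdir/awesomeproject-2016-01-$day.sql.bz2"
done

cd "$workdir"

# Files to keep appear twice, files to delete appear once.
# sort makes the duplicates adjacent so that uniq -u can drop them.
(ls -t | head -n 7 ; ls -t) | sort | uniq -u | xargs --no-run-if-empty rm

remaining=$(ls | wc -l)
oldest_kept=$(ls -t | tail -n 1)
echo "Files kept: $remaining, oldest: $oldest_kept"
```

Running the pipeline a second time in the same directory deletes nothing, since every remaining file is then listed twice.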

As we pay Amazon depending on the amount of data stored on S3, we also compress our dumps as much as possible, using bzip2 --best. Even if prices are really cheap, it is always worth using a single command to save money, isn’t it?

Uploading Database Dumps on Amazon S3

Storing dumps on the same machine as the databases is not a good idea. Hard disks may crash, users may lock themselves out because of too restrictive iptables rules (true story), etc. So, let’s use a cheap and easy-to-use solution: moving our dumps to Amazon S3.

For those unfamiliar with Amazon services, S3 should have been called “Amazon Unlimited FTP Servers”, according to the AWS in Plain English post. It is used to:

- Store images and other assets for websites.

- Keep backups and share files between services.

- Host static websites.

Also, many of the other AWS services write and read from S3.

Of course, you can restrict access to your files, as we are going to configure it.

Creating a Bucket

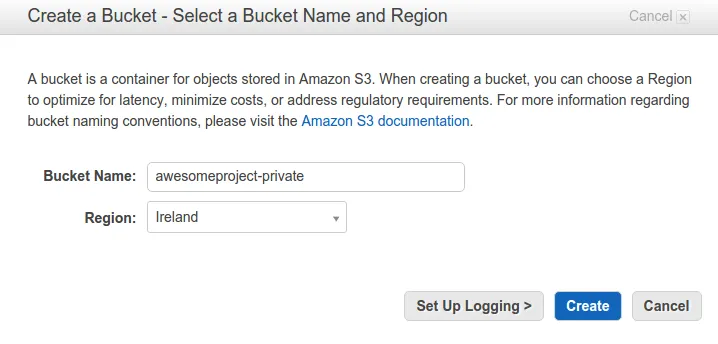

The first step is to create an S3 bucket. If the term is unfamiliar, you can read bucket as hosting space. So, log in to your Amazon Web Services (AWS) console and select S3. Then click Create bucket, give it a name, and choose a location for it.

Keep the default values for all other parameters. We are now going to restrict access to our bucket by creating a specific user.

Restricting Access to the Bucket

This is the only painful step: creating a user with the correct permissions to access our bucket. Indeed, our server script needs to connect to AWS. We could of course give it our own root AWS credentials. But, just as it is safer to use a low-privilege account instead of root on Linux, we are going to create an awesomeproject user.

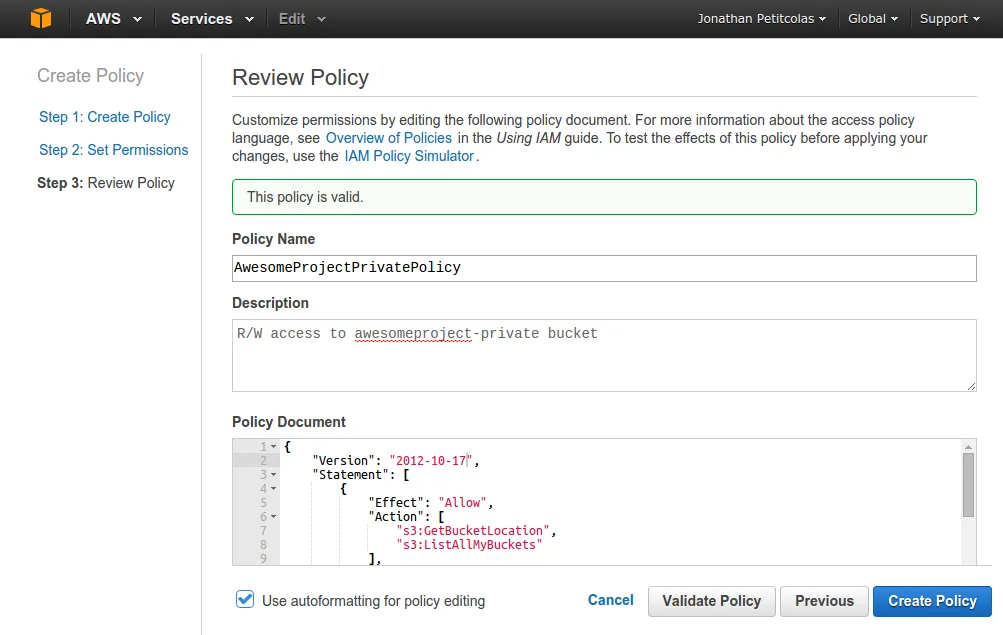

So, go to the IAM service (Identity and Access Management). In the left menu, choose Policies and create a new one from scratch. Give it a name, a description, and add the following content:

    {
      "Version": "2012-10-17",
      "Statement": [
        {
          "Effect": "Allow",
          "Action": ["s3:GetBucketLocation", "s3:ListAllMyBuckets"],
          "Resource": "arn:aws:s3:::*"
        },
        {
          "Effect": "Allow",
          "Action": ["s3:ListBucket"],
          "Resource": ["arn:aws:s3:::awesomeproject-private"]
        },
        {
          "Effect": "Allow",
          "Action": ["s3:PutObject", "s3:GetObject", "s3:DeleteObject"],
          "Resource": ["arn:aws:s3:::awesomeproject-private/*"]
        }
      ]
    }

This policy allows three operations, respectively:

- List all buckets available to user (required to connect on S3),

- List bucket content on the specified resource,

- Write, read and delete permissions on bucket content.

Validate the policy and create a new user (in the Users menu). Do not forget to save the user’s credentials, as the secret access key cannot be retrieved later.

Finally, select the brand new user, and attach your AwesomeProjectPrivate policy to it. This cumbersome configuration is now complete. Let’s write our last lines of Bash.
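The same setup can presumably be scripted with the AWS CLI instead of the console. The sketch below is an alternative to the steps above, not the method used in this post. It assumes the CLI is already configured with credentials allowed to manage IAM, and that the policy JSON has been saved to a local file; the create_backup_user function name and the policy.json file name are hypothetical.

```shell
#!/bin/bash

# Create the policy, the user, and an access key, then attach the policy.
# Arguments: policy name, user name, path to the policy JSON file.
create_backup_user() {
    local policy_name="$1" user_name="$2" policy_file="$3"
    local policy_arn

    # create-policy returns the ARN of the new policy, needed to attach it.
    policy_arn=$(aws iam create-policy \
        --policy-name "$policy_name" \
        --policy-document "file://$policy_file" \
        --query 'Policy.Arn' --output text) || return 1

    aws iam create-user --user-name "$user_name" || return 1
    aws iam attach-user-policy --user-name "$user_name" --policy-arn "$policy_arn" || return 1

    # The secret access key is only shown once: store this output safely.
    aws iam create-access-key --user-name "$user_name"
}

# Example call (hypothetical file name policy.json):
# create_backup_user AwesomeProjectPrivate awesomeproject policy.json
```

The function stops at the first failing step, so a half-created user is easy to spot and clean up by hand.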

Uploading our Dumps to Amazon S3

To upload our files, we use the AWS-CLI tools. Installing them is as simple as:

    sudo pip install awscli

After configuring user credentials through the aws configure command, we can complete our script with the following lines:

    # Configuration
    BUCKET_NAME="awesomeproject-private"

    # [...]

    # Upload on S3
    /usr/bin/aws s3 sync --delete $DUMPS_FOLDER s3://$BUCKET_NAME

We synchronize our dumps folder (removing obsolete files) to our bucket. Do not forget to use the full path to the AWS binary. Otherwise, you may get errors when the script is launched from a cron job.
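Since sync --delete removes remote files that no longer exist locally, it can be worth previewing the operation first. Here is a minimal sketch using the --dryrun option of aws s3 sync. The upload_dumps function and the DRY_RUN and AWS_BIN variables are hypothetical names introduced for this example.

```shell
#!/bin/bash

# Full path to the AWS binary, overridable for testing.
AWS_BIN="${AWS_BIN:-/usr/bin/aws}"

# Synchronize a local folder to a bucket.
# With DRY_RUN=1, aws only prints what it would upload or delete.
upload_dumps() {
    local folder="$1" bucket="$2"
    local args=(s3 sync --delete)

    if [ "${DRY_RUN:-0}" = "1" ]; then
        args+=(--dryrun)
    fi

    "$AWS_BIN" "${args[@]}" "$folder" "s3://$bucket"
}

# Example calls:
# DRY_RUN=1 upload_dumps /home/awesomeproject/dumps/ awesomeproject-private
# upload_dumps /home/awesomeproject/dumps/ awesomeproject-private
```

A dry run is especially useful the first time, because an empty or mistyped local folder combined with --delete would empty the bucket.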

Final Script

For the record, here is the full script:

    #!/bin/bash

    # Configuration
    CONTAINER_NAME="awesomeproject_pgsql"
    FILENAME="awesomeproject-`date +%Y-%m-%d-%H:%M:%S`.sql"
    DUMPS_FOLDER="/home/awesomeproject/dumps/"
    BUCKET_NAME="awesomeproject-private"

    # Backup from docker container
    docker exec $CONTAINER_NAME sh -c "PGPASSWORD=\"\$POSTGRES_PASSWORD\" pg_dump --username=\$POSTGRES_USER \$POSTGRES_USER > /tmp/$FILENAME"
    docker cp $CONTAINER_NAME:/tmp/$FILENAME $DUMPS_FOLDER
    docker exec $CONTAINER_NAME sh -c "rm /tmp/awesomeproject-*.sql"

    # Keep only 7 most recent backups
    cd $DUMPS_FOLDER && (ls -t | head -n 7 ; ls -t) | sort | uniq -u | xargs --no-run-if-empty rm
    cd $DUMPS_FOLDER && bzip2 --best $FILENAME

    # Upload on S3
    /usr/bin/aws s3 sync --delete $DUMPS_FOLDER s3://$BUCKET_NAME

Cron Task

Finally, let’s cron our script to automatically save our data each day. Add the following file as /etc/cron.d/awesomeproject:

    # Daily DB backup
    00 23 * * * awesomeproject /home/ubuntu/awesomeproject/bin/db-save.sh >> /home/ubuntu/cron.out.log 2>&1

It launches the script every day at 11pm as the awesomeproject user. Prefer absolute paths to relative ones. It will save you some serious headaches.

Another useful tip when you deal with cron jobs is to redirect their output to a file. It is essential for debugging. Here, we append standard output to the cron.out.log file (via the >> operator), and redirect the error output to the standard output, thanks to 2>&1.
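The order of the two redirections matters. The following sketch, with a made-up noisy function standing in for the backup script, compares the form used in the cron line with the reversed one.

```shell
#!/bin/bash

# A stand-in for the backup script: one line on stdout, one on stderr.
noisy() {
    echo "dump finished"
    echo "warning: something went wrong" >&2
}

both_log=$(mktemp)
stdout_only_log=$(mktemp)

# Same order as the cron line: stdout goes to the file, then stderr follows it.
noisy >> "$both_log" 2>&1

# Reversed order: stderr is pointed at the *current* stdout before the
# file redirection happens, so only stdout lands in the file.
noisy 2>&1 >> "$stdout_only_log"

echo "lines captured with '>> file 2>&1': $(wc -l < "$both_log")"
echo "lines captured with '2>&1 >> file': $(wc -l < "$stdout_only_log")"
```

With the reversed order, error messages never reach the log file, which is exactly the output you need when a cron job fails.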

We can now enjoy the security of daily database dumps. Great!

Authors

Full-stack web developer at marmelab - Node.js, React, Angular, Symfony, Go, Arduino, Docker. Can fit a huge number of puns into a single sentence.