Lean Startup, day 14: Customer Acquisition

In the previous post in this series, François explained how we built a landing page with a clear value proposition for Misocell, the Lean Startup experiment we’re running. With this landing page, we want to test our ability to acquire customers based on a product promise. Now it’s time for the real customer acquisition test. Can we get people to sign up for our product?

Customer Acquisition Channels

The Lean Startup movement didn’t invent anything regarding customer acquisition, especially concerning digital products. Acquisition channels for new prospects are well known, and there is only a handful of them:

- Word of mouth, which is the magic ingredient everyone is looking for. But let’s face it, it doesn’t happen often, and it is impossible to engineer.

- Natural traffic, coming from search engines like Google, and based on a match between customer searches and a product’s description

- Social traffic, coming from social networks like Twitter, Facebook, or LinkedIn. It only works well if you already have a large network (e.g. a Twitter account with tens of thousands of followers).

- Paid traffic, coming from ads displayed on search engines or social networks, bought on a per-click basis. The price of a single click depends greatly on the search term, the customer’s profile, and the competition.

- Display campaigns, which are also ads but paid per view, not per click. This channel is useful to increase brand awareness, but useless in the early stages of a product, where you want customers, not a brand.

- Emailing, which requires acquiring an email database and targeting recipients very carefully to avoid ending up in spam folders. Sometimes useful for B2C products, much less so for B2B products, where an email address becomes obsolete within a few years because people often change positions or companies.

- Cold calling and other sales techniques, which require acquiring a phone number database, and an army of qualified salespeople with a pitch that works without visuals. This also includes buying a booth at a conference or a trade show. Very expensive, and hard to scale.

Each of these techniques has a different cost, ranging from a few cents (for natural traffic) to hundreds of dollars per customer (for cold calling). Yes, even natural traffic requires time and money to optimize your site for search engines (Search Engine Optimization, or SEO, isn’t free). You can’t consider the most expensive channels unless the Average Revenue Per User (ARPU) of your product is several times the cost of acquisition. For instance, if cold calling produces customers at a cost of 250€ per customer, it should only be considered if the ARPU is over 1,000€ per customer.
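The rule of thumb above boils down to a ratio check between ARPU and the cost of acquiring a customer (CAC). Here is a minimal Python sketch; the function names are hypothetical, and the default ratio of 4 is an assumption taken from the 250€ / 1,000€ example rather than a universal threshold.

```python
def min_arpu_for(cac: float, min_ratio: float = 4.0) -> float:
    """Smallest ARPU that justifies a channel with the given cost of acquisition (CAC)."""
    return cac * min_ratio


def channel_is_viable(arpu: float, cac: float, min_ratio: float = 4.0) -> bool:
    """True if ARPU is at least `min_ratio` times the cost of acquiring a customer."""
    if cac <= 0:
        # Even "free" channels cost time and money (e.g. SEO), so a zero CAC is a data error
        raise ValueError("cost of acquisition must be positive")
    return arpu >= min_arpu_for(cac, min_ratio)


if __name__ == "__main__":
    print(min_arpu_for(250))             # 1000.0
    print(channel_is_viable(1200, 250))  # True
    print(channel_is_viable(600, 250))   # False
```

Expressing the threshold as a parameter makes it easy to compare channels under a stricter or looser ratio.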

People working in Digital Marketing are constantly inventing new ways to acquire customers. For instance, organizing a Hackathon to build new products on top of a company’s API, effectively delegating customer acquisition to unpaid developers. These techniques, grouped under the term Growth Hacking, are not free, and are often less effective than the ones listed above. They can complement the usual acquisition channels, but not replace them.

Acquisition and Activation

When any of the above channels works, a visitor comes to the site and looks at the product description. Getting visits is called acquisition. On average, visitors stay less than 8 seconds on a website. Some visitors decide to go further based on what they see during that time: these are the well-targeted ones. These better qualified visitors are called prospects. But until they pay for the product, they are still only prospects, not customers. The process of turning a prospect into a customer is called activation. To activate a prospect, you must get them through a subscription process and receive money from them.

How do you test activation? You need a real product, one that a prospect can actually buy, and a subscription process. At this stage of the Misocell experiment, we have neither. So we can only try to measure our Acquisition rate, not our Activation rate. We decide to measure our ability to turn a visitor into a prospect by counting the number of signups to the “subscribe to the beta” form.
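To make the difference between the two rates concrete, here is a minimal Python sketch of the funnel described above. The class and function names are hypothetical, and the counts used in the tests are illustrative, not Misocell data.

```python
from dataclasses import dataclass


@dataclass
class FunnelCounts:
    """Counts for the funnel stages described above.

    visitors:  people who land on the page (acquisition)
    prospects: visitors who sign up to the beta form
    customers: prospects who pay (activation); 0 for now, since there is
               no product or payment flow to measure yet
    """
    visitors: int
    prospects: int
    customers: int = 0


def rate(numerator: int, denominator: int) -> float:
    """Conversion rate between two stages; 0.0 when the earlier stage is empty."""
    return numerator / denominator if denominator else 0.0


def signup_rate(f: FunnelCounts) -> float:
    """Share of visitors who become prospects: the metric measured in this experiment."""
    return rate(f.prospects, f.visitors)


def activation_rate(f: FunnelCounts) -> float:
    """Share of prospects who become paying customers: not measurable yet."""
    return rate(f.customers, f.prospects)
```

For example, 5 signups out of 200 visitors gives a signup rate of 0.025 (2.5%), while the activation rate stays at 0 until there is something to buy.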

For a deeper look at acquisition and activation, and other relevant metrics for startups, I recommend reading the excellent Startup Metrics For Pirates slide deck. It’s full of useful insights for product marketing and product management.

Misocell Customer Acquisition Strategy

At this stage, we’re not even considering the most expensive channels like cold calling: our expected ARPU is too low. We’re not considering social or natural channels either, because they rely on an established product. Besides, there is no common search term that people would type when looking for something similar to Misocell. If you remember our Business Model Canvas, we decided to focus on paid advertising as our first customer acquisition channel.

But which network should we target: Google, LinkedIn, Twitter, or Facebook? How should we target our leads, based on demographics and interests? How should we design the wording of the ads for maximum conversion? And how much should we expect to pay for each visitor?

Unless we actually do the exercise of buying visitors, there is no way to answer these questions. That’s why we’re doing a Lean Startup experiment: we know we have to get our hands dirty to get factual answers. So let’s do the experiment of buying traffic.

Google Ads

Setting up a Google Ads campaign isn’t an easy task. If you do it for the first time, you’ll do it wrong several times, and probably lose money in the process. For instance, we quickly spend 50€ on Google traffic, mostly mobile clicks. Unfortunately, our website is not optimized for mobile devices.

After a couple of days of trial and error, I decide to get in touch with a business contact of mine, who is a proficient Google Ads user. In ten minutes, he sets us on the right path: rewriting all our campaigns and choosing different keyword sets.

His first piece of advice is to set up two ad groups: one for the US, and one for Europe. Because we are targeting business people, we can restrict our ads to weekdays and business hours. And because our website is optimized for desktop users, we can exclude mobile devices. These settings keep us from paying for clicks from the wrong device, or from people browsing for the wrong purpose.
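The targeting rules above can be summarized as a simple eligibility check. This is a minimal Python sketch under stated assumptions: it is not the Google Ads API, the `AdGroupTargeting` class and `should_show_ad` function are hypothetical, and the 9:00 to 18:00 business-hours window is an assumed value.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class AdGroupTargeting:
    """Hypothetical targeting rules for one ad group (not the Google Ads API)."""
    region: str                 # e.g. "US" or "Europe"
    weekdays_only: bool = True  # business audience: no weekend impressions
    start_hour: int = 9         # assumed business hours, visitor's local time
    end_hour: int = 18          # exclusive upper bound
    allow_mobile: bool = False  # the landing page is only optimized for desktop


def should_show_ad(t: AdGroupTargeting, region: str, local_time: datetime, device: str) -> bool:
    """Return True if an impression matches the ad group's targeting rules."""
    if region != t.region:
        return False
    if t.weekdays_only and local_time.weekday() >= 5:  # 5 = Saturday, 6 = Sunday
        return False
    if not (t.start_hour <= local_time.hour < t.end_hour):
        return False
    if device == "mobile" and not t.allow_mobile:
        return False
    return True


# One ad group per region, as suggested above
us = AdGroupTargeting(region="US")
europe = AdGroupTargeting(region="Europe")
```

Keeping one ad group per region means each group's hours are interpreted in its own visitors' local time.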

The second piece of advice is to create display campaigns with the AdWords tool that generates images automatically, and search campaigns that start with broad keywords. Later on, we can narrow down the keywords once we find out which ones work best.
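The "broad first, then narrow" approach can be sketched as a periodic pruning step over per-keyword statistics. This is a hypothetical Python sketch: `KeywordStats`, `narrow_keywords`, and the thresholds (20 clicks, 1€ per click) are assumptions for illustration, not values from our campaigns.

```python
from dataclasses import dataclass


@dataclass
class KeywordStats:
    keyword: str
    clicks: int
    cost: float  # total spend on this keyword, in euros


def narrow_keywords(stats: list[KeywordStats], min_clicks: int = 20, max_cpc: float = 1.0) -> list[str]:
    """Return the keywords worth keeping, in their original order.

    Keywords with too few clicks are kept: there isn't enough data to judge them yet.
    Keywords with enough clicks are kept only if their cost per click is acceptable.
    """
    kept = []
    for s in stats:
        if s.clicks < min_clicks:
            kept.append(s.keyword)  # still being tested
        elif s.cost / s.clicks <= max_cpc:
            kept.append(s.keyword)  # enough data, and affordable
        # otherwise: enough data and too expensive, so the keyword is dropped
    return kept
```

Once a campaign produces signups, cost per signup is a better pruning criterion than cost per click.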

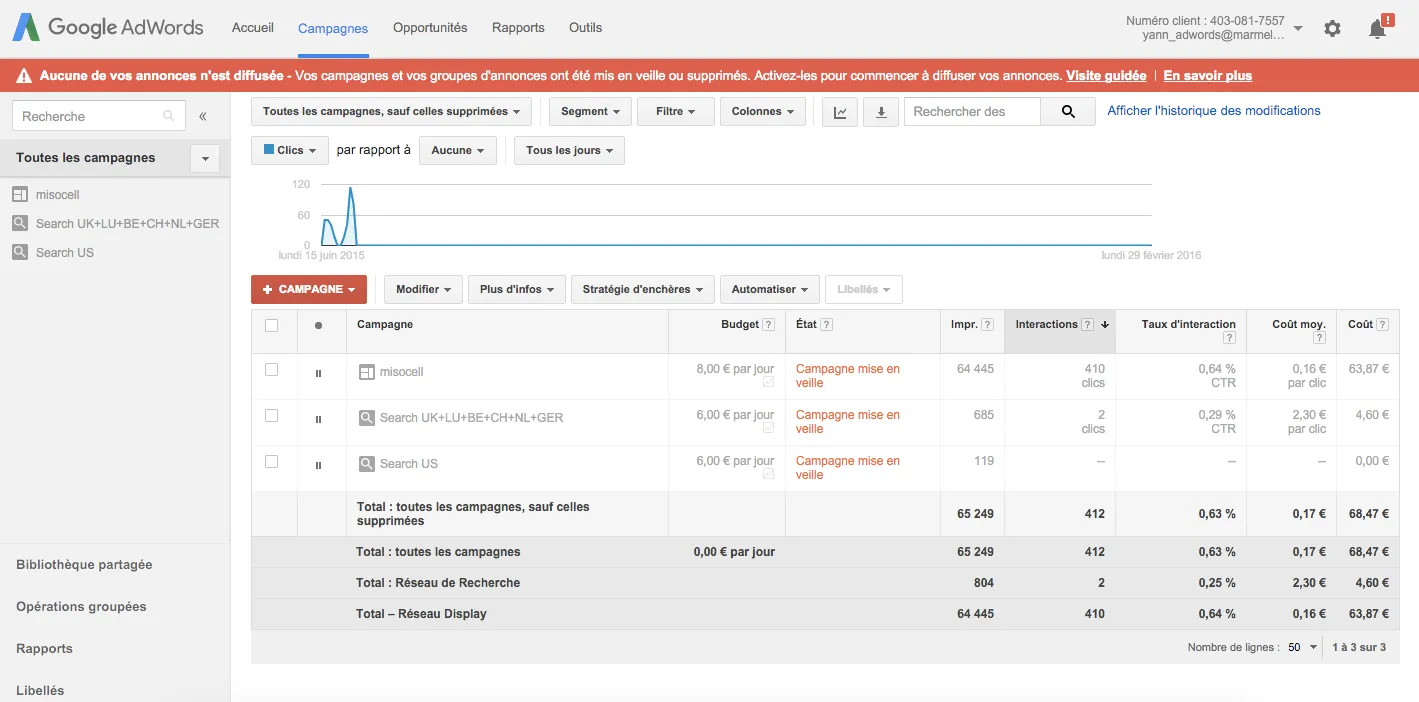

After these adjustments, here is the summary of our costs:

- On the display campaign, each lead costs us an average of 0.16€ (for a total of 410 clicks).

- On the search campaigns, each lead costs us an average of 2.30€ (for a miserable total of only 2 clicks).
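Multiplying these averages by the number of clicks gives a rough idea of where the money went. The totals below are derived from the rounded averages above, so they are approximations, not figures from our billing.

```python
# Approximate totals implied by the averages above (average cost per click x clicks)
display_cpc, display_clicks = 0.16, 410
search_cpc, search_clicks = 2.30, 2

display_total = display_cpc * display_clicks  # about 65.60€
search_total = search_cpc * search_clicks     # about 4.60€
blended_cpc = (display_total + search_total) / (display_clicks + search_clicks)

print(f"display: {display_total:.2f}€, search: {search_total:.2f}€")
print(f"blended cost per click: {blended_cpc:.2f}€")
```

Almost all the spend and nearly all the clicks come from the display campaign, which is why its traffic quality matters so much in what follows.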

After 4 days of running this new campaign, we have several dozen paid visitors to the landing page, but zero clicks on the “subscribe to the beta” call-to-action. The charts below show a large share of direct traffic (our own tests), and 165 paid sessions over 5 days (we turn the ads off on the weekend).

What’s wrong with our campaign? Is it the wording of the ads, the ad targeting, or the keywords? Perhaps visitors don’t understand the value proposition on the landing page. Fewer than 10% of paid visitors start the teaser video, and judging by the average time on site for paid traffic, they probably don’t watch it to the end.

Most of the traffic comes from the Google Display Network, not from the Google Search Network. We come to understand that the display network produces low-quality visitors.

LinkedIn Ads

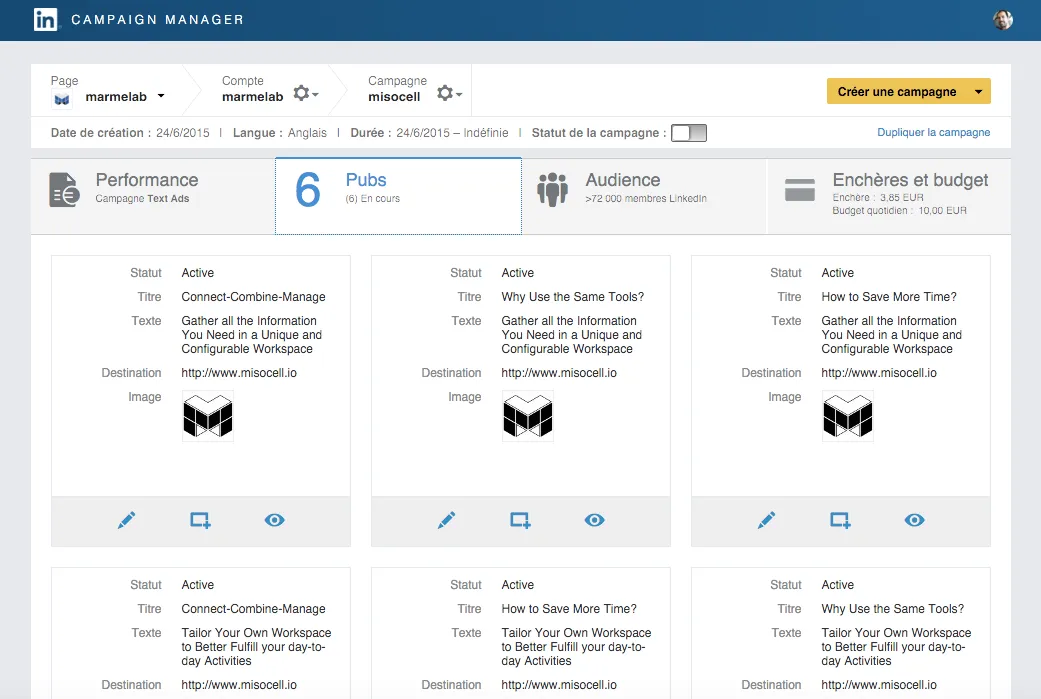

In parallel, we spent about two days setting up a LinkedIn Ads campaign. If the Google Ads experience was tedious, the LinkedIn Ads manager is truly an instrument of torture.

Once launched, no matter how we tweak the LinkedIn campaign, it never brings any leads to our landing page. Not a single one.

Paid Traffic Brings Zero Conversions

Had we mastered both ad manager interfaces, we might have improved the results. But at this stage we are a bit desperate: multiplying a conversion rate of zero by anything won’t bring us many beta users.
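The arithmetic behind this dead end is easy to spell out. In this minimal Python sketch (hypothetical function names), buying more traffic at a zero conversion rate still yields zero signups, and the cost per signup is unbounded no matter how little we spend.

```python
import math


def expected_signups(visitors: int, conversion_rate: float) -> float:
    """Signups we can expect from buying traffic at a given conversion rate."""
    return visitors * conversion_rate


def cost_per_signup(spend: float, signups: int) -> float:
    """Cost of acquiring one beta user; infinite when nobody signs up."""
    return spend / signups if signups else math.inf
```

With 165 paid sessions and no signups, the measured conversion rate is 0, so `expected_signups(1_000_000, 0.0)` is still 0. Improving the conversion rate has to come before buying more traffic.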

We decide to try another acquisition channel: non-ad publications on social networks. This could help us determine whether the problem lies with the landing page or with the ad campaigns.

Social Network Traffic Brings A Lot Of Conversions

Jonathan and I tweet about misocell.io on our own Twitter accounts. Pretty soon, the percentage of users watching the video triples, and the satisfying “ding” of a beta registration email starts ringing regularly.

These leads are better qualified, cost nothing, and convert efficiently.

But there is a big problem: all the people who register through the social channel are developers - we know them from our social networks. In a way, that’s normal: our own social network is mostly made of developers. But that’s absolutely not the customer segment we are trying to address.

So our landing page, targeted at marketing or operations teams, doesn’t manage to convince marketing and operations visitors to sign up, but it manages to convince developers…

There Are A Million More Reasons We Could Be Wrong

But that isn’t the product we had in mind in the first place. Our entire Lean Canvas is built on a customer segment excluding technical teams. Most of our Problem Interviews were with non-technical people, and they had somehow validated that the problem existed, and that no other satisfactory solution could be found.

There were still many possible reasons why our experiment had failed:

- wrong ad network

- wrong ad settings

- bad ad

- bad ad / value proposition match

At least, using our social network helped us rule out some possible causes of failure:

- bad value proposition

- bad demo video

- bug in the tracking code

Besides, it’s hard to draw conclusions from this limited experiment. There is a difference between correlation and causality. Even though we detect a (lack of) correlation between a keyword and a behavior, we have no proof that the keyword causes the behavior.

So we decide to continue progressing towards our initial goal: discovering an efficient customer acquisition channel through paid traffic. But as we’ve seen with problem interviews, we are on the wrong path.


We Are Targeting The Wrong Segment

Then it strikes us: if only developers sign up, maybe we’re on the wrong path. Maybe we should design a product for this population. After all, developers are more willing to use SaaS products, they know what an admin is, and they usually have to develop something like misocell in their projects. So they know how much it costs.

To be honest, the prospect of talking to CTOs or developers was extremely appealing. We are a web development company; we know the developer population, its networks, and its vocabulary. We would have no difficulty gathering a large number of beta testers from the developer community at marginal cost. François could already imagine the new value proposition:

Don’t spend developer time to build an admin. Let your collaborators use misocell to customize their workspace and complete tasks in no time.

Is it already time to pivot? The temptation to come back to our comfort zone is extremely strong.


Interviews Would Help

In retrospect, we made one big mistake at that stage: we didn’t meet people in person to talk about our solution. We were expecting an online validation of our demo, but the lack of such validation left too many assumptions open. Had we presented the demo to the same people we had met for the problem interviews, we would probably have nailed it sooner.

Ash Maurya recommends following up the problem interview with a solution interview, where you demo the solution face-to-face to a prospect and ask whether it fits their need. To be completely factual, the conclusion of such interviews should be based on whether the prospect becomes a customer or not. Because if we can’t convert a prospect into a customer with a hand-made demo and a 20-minute talk, there is no chance we can convince other prospects with a landing page (on which the average time spent is 8 seconds).

Our mistake comes from confusing the demo with the MVP. The demo is just a tool to help during live interviews, while an MVP serves to prove the entire online scenario.

Next Steps

Problem interviews and customer acquisition experiments all lead us to conclude that we need to revise our Business Model, mostly targeting a new customer segment. That’s what we’ll describe in the next post in this series.

Credits:

Thumbnail picture: labyrinthe, by r0m@in

Authors

Agile coach and scrummaster at marmelab. Passionate about Lean Startup, Scrum, Kanban, and that sort of thing. Co-founder of play14, the international Serious Game unconference.