Amnesty International: Taking advantage of the Lean Startup methodology to prove ourselves wrong

In the previous blog post about the product we built for Amnesty International, we focused on the problem, then on finding a solution.

Now, what do we start with?

We managed to reach a shared vision of what our product should do. That vision is based on a few assumptions. By no means are we going to build it all at once and see if magic happens. We need to test whether our assumptions are right, at the lowest possible cost, then learn, improve, and iterate: the Lean Startup way.

Initial Engagement

After reassessing our assumptions, the riskiest one seemed to be about engagement:

We think that people will be engaged enough to send an email, in their name, to the authorities responsible for human rights violations

We had many ways to test this assumption:

- Test on the prototype (in InVision)

- Build a survey, using a tool like TypeForm

- Build the simplest possible app to send an email

We chose the last option because it didn’t need that much investment, and the initial survey was rather positive. We also knew we had to take particular care with the way we address people: it should be both concise and engaging.

The simplest scenario we could use was:

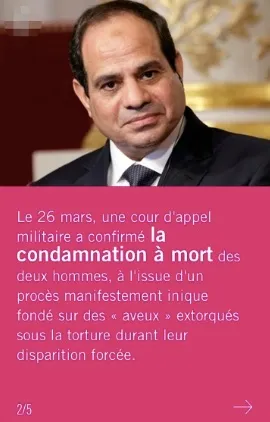

- The user receives an SMS from a third-party service

- They see a few Urgent Action description pages

- They see a call-to-action to send an email

- They can review and send the email

- They see an outboarding page

We built a first (and not very pretty) version in 2 weeks, then tested it.

We ran a quantitative test, sending an SMS to about 2,000 Amnesty contacts who had once been enrolled but had not been very active (and who had agreed to receive SMS): that’s the closest we could get to our target audience.

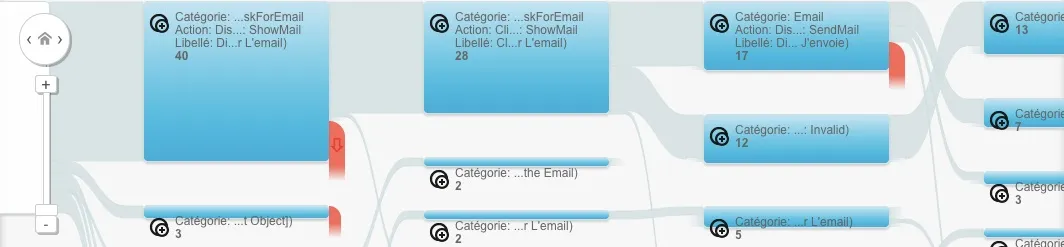

To know how many people we lost at each step of the test scenario, we tracked every user action using an analytics solution.

The results were awful: out of 2,000 SMS sent, only about 100 people visited our app. But once in the app, we didn’t lose that many people: about a quarter on the first page, and another quarter when asked to send an email.

That’s a 50% conversion rate from the home page to the main action: people who reach the app are engaged.
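The funnel arithmetic above can be sketched in a few lines of Python. This is only an illustration: the step names are hypothetical, and the intermediate counts (75 and 50) are derived from the rounded figures quoted in this post, not from the actual analytics data.

```python
from typing import List, Tuple

Step = Tuple[str, int]


def funnel_report(steps: List[Step]) -> List[Tuple[str, float, float]]:
    """For each step, return (name, share kept from previous step, share kept from first step).

    Assumes every count is greater than zero.
    """
    first = steps[0][1]
    previous = first
    report = []
    for name, count in steps:
        report.append((name, count / previous, count / first))
        previous = count
    return report


def conversion(steps: List[Step], start: str, end: str) -> float:
    """Share of the people counted at `start` who are still there at `end`."""
    counts = dict(steps)
    return counts[end] / counts[start]


# Illustrative counts, rounded as in the post (hypothetical step names).
steps = [
    ("sms_sent", 2000),
    ("app_visited", 100),
    ("passed_first_page", 75),  # about a quarter of visitors lost on the first page
    ("sent_email", 50),         # another quarter lost when asked to send an email
]

for name, from_previous, from_start in funnel_report(steps):
    print(f"{name}: {from_previous:.0%} of previous step, {from_start:.1%} of SMS sent")

print(f"home page to email: {conversion(steps, 'app_visited', 'sent_email'):.0%}")
```

Looking at each step separately makes the contrast obvious: the SMS keeps only about 5% of recipients, while the app itself keeps about half of its visitors.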

Why did we lose so many people from SMS to app? It could be bad targeting, awkward SMS wording, poor timing, topic choice… We decided to handle that later. The assumption was validated well enough for now.

Will To Send a Letter

Our second assumption was about sending letters, since they seem to have more impact on human rights violations than emails.

We think that most people will engage in sending a letter after the email to have more impact. They will have enough time despite the brevity of their current experience.

We chose to make it quick and simple:

- another call to action at the end of the email process

- a form asking for more information (name, address)

- an email containing the letter to print, along with sending instructions

We thought that an email with further instructions was a good solution because of its asynchronous nature, compared to the instantaneous experience of the app. As printing, signing, and going to the post office to send the letter takes some time, we thought it would make more sense if people could do it later, just by checking their inbox. It took only a couple of days to implement.

To test this, we preferred to run a qualitative test. We really weren’t sure whether what we built would appeal to our users, and we needed more insights. We invited a dozen people, split into 4 sessions of about an hour, and simulated a real experience.

As observers, we sat right next to them to check both how they used the app and how they reacted to it. We needed to pay particular attention to a few things:

- what they read or skipped

- how long they stayed on a page

- if and where they struggled

- when they made weird faces

Once the experiment was over, we debriefed with our testers. First, we asked a few general questions to find out whether they understood what they did, and why they did it (Would they still do it if we weren’t in this context?). We then focused on our observations, to understand why they struggled or were intrigued at some point. In the end, we listened to them, taking feedback and asking questions to understand the root causes of their feelings and actions.

The results were awful, again. Only one person would have sent the letter.

What went wrong?

- printing and going to the post office is cumbersome and time-consuming

- some people in our user group had never sent a letter and didn’t understand its value

- sending an email and a letter seemed redundant

We needed to rethink the feature, and we quickly came up with the idea of sending the letter on their behalf. Amnesty isn’t staffed to send so many letters, but there are a few service providers able to do it.

Service providers come at a cost, though: we would need to add a payment interface to the app.

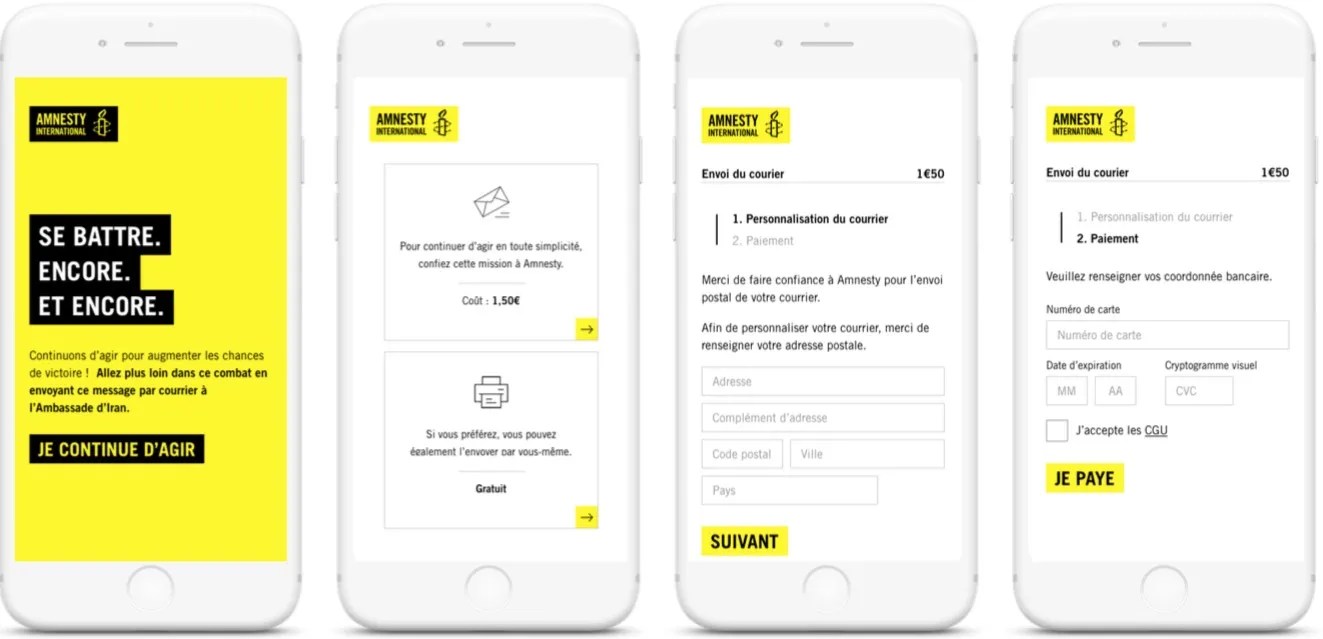

Will To Pay For Automated Sending

Here comes the next assumption.

Activists are willing to enter their card number on the app and pay about 1.5€ for the printing and the sending of the letter

Such a feature requires connecting to two third-party services, one for sending and another for payment, plus a few more interfaces and much more security. That’s a lot of development and too much of an investment for a simple test.

We did need to test this assumption, though. We decided to run another qualitative test, but on a prototype this time. With the huge help of Sébastien from Tribal, we built the few screens and their navigation on InVision. It only took a few hours and allowed us to start the test quickly. Testers could even use their own mobile phone!

Following the same principles as the previous qualitative test, we gathered many insights:

- more people were willing to send the letter

- a significant number of people thought 1.5€ was too much, even for an international letter

- the value of sending a letter still wasn’t well understood

We went forward, but we still needed to address the remaining problems.

The next thing we agreed to try:

- offer only letters at first

- Amnesty pays for the sending, up to a certain budget

- once that budget is spent, offer only emails

We hope this will make the application less confusing by offering a single action instead of two seemingly identical ones, and that it will encourage people to send more letters.
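The budget rule above could be sketched as follows. This is a minimal illustration, not the app's actual code: the `LetterBudget` class and its field names are hypothetical, and the cost per letter is assumed to be the 1.5€ price tested earlier, which may differ from what a service provider really charges.

```python
from dataclasses import dataclass


@dataclass
class LetterBudget:
    """Tracks the sending budget in cents (hypothetical helper)."""

    remaining_cents: int
    letter_cost_cents: int = 150  # assumption: about 1.5€ per letter

    def next_action(self) -> str:
        """Offer a letter while the budget covers one, otherwise fall back to email."""
        if self.remaining_cents >= self.letter_cost_cents:
            self.remaining_cents -= self.letter_cost_cents
            return "letter"
        return "email"


# Usage: a budget covering exactly two letters.
budget = LetterBudget(remaining_cents=300)
actions = [budget.next_action() for _ in range(3)]
print(actions)
```

Either way, the user only ever sees one action at a time, which is the point of the change.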

Conclusion

In the Lean Startup movement, people often say “Ideas are worthless, execution is everything”.

I believe that if we had trusted our idea and gone full throttle from the start, we would be done by now, with a full-blown product nobody uses. We haven’t quite reached that sweet spot of product-market fit yet. But we learned so much with so little investment that we feel very confident we’re going to reach it soon, by continuing to experiment, learn, and tweak our product iteratively.

Next step: actually releasing the product, and iterating on the next hypotheses brought by user insights.

Authors

Facilitator at marmelab, Florian also gives lectures to IT students. He's a biker, plays the guitar, and brews his own beer.