AI Wars Ep. 3: The Return of the Developer

A Long Time Ago in an IT company Far, Far Away…

EPISODE III - THE RETURN OF THE DEVELOPER

It is a period of software development. AI-powered tools reign over software development. They are able to generate code faster than any developer ever could.

But now bugs and security issues are everywhere, and the system is on the brink of destruction.

Who can save the galaxy from this chaos?

Coding agents can generate code. We proved it in the previous episode of The AI Wars Trilogy (AI Strikes Back: Using an LLM to write COBOL). But we also saw that by default, AI assistants generate code that is not maintainable, and has security issues. So how can we use these tools without degrading the quality of their output? This is the subject of this article. Spoiler: you still need a developer to make good architectural decisions and review the code the AI generates.

You don’t need to know any COBOL to read this article. But be aware that you will see some.

COBOL-Admin: A Case Study

I already described COBOL-Admin, a COBOL program that generates admin interfaces by leveraging OpenAPI specifications, in the first episode (A new hope. Good bye React. Meet COBOL-Admin.). Now is the time to reveal the secrets of this adventure, and explain how I programmed this beautiful admin interface even though before this experiment, I had never written a line of COBOL code in my entire life.

Not knowing COBOL didn’t stop me. I believed that my experience as a developer, and my knowledge of software architecture, would be enough to guide a coding agent (in this case, Claude Code) to generate code that is maintainable and secure, even in a language I do not know.

The result, in all its glory, is visible on GitHub: marmelab/COBOL-admin, and you can try the live demo at cobol-admin.fly.dev.

Obviously, I would have been way more efficient using a language I knew beforehand, but where is the fun in that?

We Need a Plan

First, I needed a plan. I just had a vague idea of what I wanted to achieve.

I started by asking the coding agent what COBOL could do, and how I could use it to generate an admin interface. The agent explained to me that COBOL can do anything (the only limit is yourself). A web server? Easy:

    HANDLE-REQUEST.
        CALL "cobol_cleanup_temp" END-CALL

        MOVE LOW-VALUE TO REQUEST-BUFFER
        MOVE LOW-VALUE TO RESPONSE-BUFFER
        MOVE 0 TO RESPONSE-LEN

        CALL "recv" USING
            BY VALUE CLIENT-SOCKET
            BY REFERENCE REQUEST-BUFFER
            BY VALUE 4096
            BY VALUE 0
            RETURNING BYTES-READ
        END-CALL

        IF BYTES-READ <= 0
            GOBACK
        END-IF

        *> Parse request
        CALL "HTTP-PARSE" USING REQUEST-BUFFER
            WS-REQUEST-METHOD WS-REQUEST-PATH WS-PATH-LEN
            WS-REQUEST-BODY WS-BODY-LEN
        END-CALL

        DISPLAY "Request #" WS-REQUEST-COUNT " "
            FUNCTION TRIM(WS-REQUEST-METHOD) " "
            FUNCTION TRIM(WS-REQUEST-PATH)

        *> Route the request
        CALL "ROUTER" USING WS-REQUEST-PATH WS-PATH-LEN
            WS-ROUTE-TYPE WS-ROUTE-RESOURCE WS-RESOURCE-TABLE
            WS-PAGE WS-PER-PAGE WS-ROUTE-ID WS-STATIC-PATH
        END-CALL

So I knew I could target an ambitious goal. That’s how I came up with the plan to leverage the OpenAPI specification to generate an admin interface. The server fetches the OpenAPI documentation of a REST server passed as a parameter, then deduces the resources it has to map, and generates HTML on the fly for the requested CRUD route.
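To make the "deduces the resources" step more concrete, here is a minimal sketch in C (the language the project can call into from COBOL). It assumes the path keys of the OpenAPI document have already been extracted, and that a resource is simply the first segment of a path. The function name `resource_from_path` is hypothetical and does not come from the COBOL-Admin code base.

```c
#include <stddef.h>
#include <string.h>

/* Hypothetical helper: copy the first segment of an OpenAPI path
 * ("/authors/{id}" -> "authors") into out.
 * Returns the segment length, or -1 if the path has no usable
 * segment or out is too small. */
int resource_from_path(const char *path, char *out, size_t out_size)
{
    if (path == NULL || out == NULL || out_size == 0 || path[0] != '/')
        return -1;

    const char *start = path + 1;
    size_t len = strcspn(start, "/");   /* stop at the next '/' or at the end */

    /* Reject empty segments ("/") and path parameters ("/{id}"). */
    if (len == 0 || start[0] == '{' || len >= out_size)
        return -1;

    memcpy(out, start, len);
    out[len] = '\0';
    return (int)len;
}
```

With this assumption, `/authors` and `/authors/{id}` both map to the `authors` resource, so a caller would still need to deduplicate the names it collects.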

I didn’t use Claude Code’s plan mode. Instead, I dove head first into COBOL.

Clumsy By Default

I started with the basics: generating and serving an HTML page saying “Hello World” in COBOL.

Claude Code managed to build a web server in COBOL in a few seconds. But already at this early stage, I saw several issues:

- The AI generates everything into a single file.

- The AI creates HTML by concatenating strings in COBOL code, which is very error-prone and hard to maintain.

- The AI adds CSS in inline style attributes, which is hard to maintain.

- The code needs me to install COBOL on my machine. I told it to use Docker instead.

Maintainability matters even when the AI writes the code. The AI reads the code too. Messy code produces worse suggestions.

So I instructed Claude Code to fix these issues. It did. But as I forgot to put these instructions into Claude’s memory, it kept producing messy code, as if it were its nature.

No Hands

Then I asked the AI to fetch the OpenAPI specification from a given URL, and to extract the list of endpoints from it.

My early tests of this program proved tedious. Each time Claude finished a task, I found a small bug and I had to copy/paste the error to ask for a fix. It felt just like dealing with a robot with no hands.

As any normal developer would do, I ended up asking Claude to add automated tests for this code. That’s how it managed to finish the task without (ab)using me as a tester.

What do tests look like in COBOL? I’m glad you asked:

    *> Tests for HTTP-PARSE module
    identification division.
    program-id. test-http-parse.

    data division.
    working-storage section.
    01 ws-request-buffer pic x(4096).
    01 ws-request-method pic x(10).
    01 ws-request-path pic x(512).
    01 ws-path-len pic 9(4) comp-5.
    01 ws-request-body pic x(4096).
    01 ws-body-len pic 9(4) comp-5.
    01 ws-expected-len pic 9(4) comp-5.

    procedure division.

        perform test-simple-get.
        perform test-root-path.
        perform test-list-path.
        perform test-post-method.
        goback.

    test-simple-get section.
        move spaces to ws-request-buffer
        move "GET /hello HTTP/1.1" to ws-request-buffer
        call "HTTP-PARSE" using ws-request-buffer
            ws-request-method ws-request-path ws-path-len
            ws-request-body ws-body-len
        end-call
        call "assert-equals" using "GET", ws-request-method(1:3).
        call "assert-equals" using "/hello", ws-request-path(1:6).
        move 6 to ws-expected-len
        call "assert-equals" using ws-expected-len, ws-path-len.

    test-root-path section.
        move spaces to ws-request-buffer
        move "GET / HTTP/1.1" to ws-request-buffer
        call "HTTP-PARSE" using ws-request-buffer
            ws-request-method ws-request-path ws-path-len
            ws-request-body ws-body-len
        end-call
        call "assert-equals" using "/", ws-request-path(1:1).
        move 1 to ws-expected-len
        call "assert-equals" using ws-expected-len, ws-path-len.

    test-list-path section.
        move spaces to ws-request-buffer
        move "GET /list/authors HTTP/1.1" to ws-request-buffer
        call "HTTP-PARSE" using ws-request-buffer
            ws-request-method ws-request-path ws-path-len
            ws-request-body ws-body-len
        end-call
        call "assert-equals" using "/list/authors", ws-request-path(1:13).
        move 13 to ws-expected-len
        call "assert-equals" using ws-expected-len, ws-path-len.

    test-post-method section.
        move spaces to ws-request-buffer
        move "POST /edit/authors/1 HTTP/1.1" to ws-request-buffer
        call "HTTP-PARSE" using ws-request-buffer
            ws-request-method ws-request-path ws-path-len
            ws-request-body ws-body-len
        end-call
        call "assert-equals" using "POST", ws-request-method(1:4).
        call "assert-equals" using "/edit/authors/1", ws-request-path(1:15).

    end program test-http-parse.

Lovely.

Automated tests are crucial: they allow coding assistants to check that the code works directly, and to iterate on their own in case of error. If only coding assistants knew they needed tests in the first place…
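If you don't read COBOL, the checks in that test file boil down to "parse a request line, then compare the method, the path, and the path length". Here is a rough C sketch of the same idea. It is not a translation of the real HTTP-PARSE module: `parse_request_line` is a hypothetical function that only handles the first line of a request.

```c
#include <stddef.h>
#include <string.h>

/* Hypothetical counterpart of HTTP-PARSE, limited to the request line:
 * split "GET /hello HTTP/1.1" into a method and a path.
 * Returns the path length, or -1 on malformed input or if a buffer
 * is too small. */
int parse_request_line(const char *line,
                       char *method, size_t method_size,
                       char *path, size_t path_size)
{
    /* The method is everything up to the first space. */
    size_t mlen = strcspn(line, " ");
    if (mlen == 0 || line[mlen] != ' ' || mlen >= method_size)
        return -1;

    /* The path follows, up to the next space or line ending. */
    const char *p = line + mlen + 1;
    size_t plen = strcspn(p, " \r\n");
    if (plen == 0 || p[0] != '/' || plen >= path_size)
        return -1;

    memcpy(method, line, mlen);
    method[mlen] = '\0';
    memcpy(path, p, plen);
    path[plen] = '\0';
    return (int)plen;
}
```

A test then only needs to call the function with a known request line and assert on the three outputs, which is exactly the kind of feedback loop a coding agent can run on its own.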

Sometimes Awesome

Then I asked Claude to generate a menu linking to the different resources, and to generate a placeholder page for each resource. Surprisingly, it did add the new code in separate files, and it even added some tests for the new code.

Then I asked it to generate a page listing the records of a resource, by fetching the data from the API. Again, the AI generated code that works, in separate files, and with tests.

I thought that my troubles were behind me. That’s one of the main problems with coding agents: they gain our trust by being really spectacular at times. So we lower our guard. Until, eventually, they fail us.

Split Personality

By then, “I” had already produced a lot of code. I decided to step back and look at the result. As I don’t know COBOL, I naturally asked Claude to review what we had built so far and propose improvements. After all, it knows COBOL better than I do.

I expected Claude to find code duplication issues, to detect missing tests, or incomplete error handling. It did. But it also discovered a few critical security issues. I quote:

- Add path validation to serve-static.cbl (prevent path traversal like ../../etc/passwd)

- Add HTML escaping for all values injected into HTML (XSS risk in page-list, page-show, page-edit)

- Sanitize resource names and field values before interpolating into CALL "SYSTEM" shell commands (command injection)

These are big security issues. How come it introduced them in the first place? The reviewer is the exact same coding agent, and it’s capable of detecting security issues. That doesn’t make sense. Are there two faces to this Claude?
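For readers unfamiliar with the first two findings, here is what the corresponding guards can look like. This is a minimal sketch in C, not the code Claude wrote for COBOL-Admin: both function names are hypothetical, the path check is purely lexical (it assumes the path was already URL-decoded and does not resolve symlinks), and the escaping covers only the five characters that matter in HTML text and quoted attributes.

```c
#include <stddef.h>
#include <string.h>

/* Return 1 if a requested static path is relative and contains no
 * ".." segment, 0 otherwise. */
int is_safe_relative_path(const char *path)
{
    if (path == NULL || path[0] == '\0' || path[0] == '/')
        return 0;

    const char *seg = path;
    while (*seg != '\0') {
        size_t len = strcspn(seg, "/");
        if (len == 2 && seg[0] == '.' && seg[1] == '.')
            return 0;               /* ".." would climb out of the root */
        seg += len;
        if (*seg == '/')
            seg++;                  /* skip the separator */
    }
    return 1;
}

/* Escape &, <, >, " and ' from src into dst.
 * Returns the number of bytes written (excluding the final NUL),
 * or -1 if dst is too small. */
int html_escape(const char *src, char *dst, size_t dst_size)
{
    if (src == NULL || dst == NULL || dst_size == 0)
        return -1;

    size_t used = 0;
    for (; *src != '\0'; src++) {
        char single[2] = { *src, '\0' };
        const char *rep;
        switch (*src) {
        case '&':  rep = "&amp;";  break;
        case '<':  rep = "&lt;";   break;
        case '>':  rep = "&gt;";   break;
        case '"':  rep = "&quot;"; break;
        case '\'': rep = "&#39;";  break;
        default:   rep = single;   break;
        }
        size_t n = strlen(rep);
        if (used + n + 1 > dst_size)    /* keep room for the NUL */
            return -1;
        memcpy(dst + used, rep, n);
        used += n;
    }
    dst[used] = '\0';
    return (int)used;
}
```

Neither guard is exotic, which is the point: a reviewer who knows what to look for spots their absence immediately.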

I looked at the third vulnerability a bit longer. CALL SYSTEM? But why on earth would a web server need to CALL SYSTEM? As a developer, I know that this type of command is radioactive, and I avoid them at all costs. If I have to use them, I think twice about the security risks.

And then I saw 2 other issues that the AI considered medium:

- Add error handling for curl/jq failures — show user-friendly error when API is down or jq fails

- Clean up temp files after use — 9 hardcoded /tmp/ files; concurrent requests cause race conditions

And so I understood what was going on.

The AI used CALL "SYSTEM" to run curl and jq as shell commands. It fetched data from the API and parsed JSON through the shell, with unsanitized input, on my machine. That’s when I felt glad about dockerizing the entire thing.

The program also stored command output in /tmp/ files with hardcoded names. If two users visited the admin at the same time, which happens in any real environment, one user would get the other’s data.

In my career, I have taken shortcuts many times, and especially during hack days. But I always weighed the trade-offs. The AI just ran into the shortcut without any consideration for the risks, and without warning me about it.

How could I trust it after that?

Trust Only In The Force

I still wanted to believe, so I asked Claude to fix these issues. For the first two issues, it managed to remove the vulnerability. But the fixes it proposed for the CALL SYSTEM were wrong:

- sanitize the input before passing it to SYSTEM.

- clean up the temp files after use.

These do not fix the root cause, which is CALL "SYSTEM" itself. Every other issue is a consequence of that. Claude wanted to treat the symptoms, not the cause. Finding the root cause is normally what every developer does when investigating a bug. But apparently, coding agents are satisfied with hiding the bug.

So I explicitly asked it to replace curl and jq with a proper COBOL library. Problem: There are no such libraries. There is no COBOL NPM. But there is hope, as you can call C libraries directly from COBOL, without going through the shell.

I came up with this plan: hand the data fetching and JSON parsing off to a C library. Once I explained it to Claude, it proceeded with enthusiasm, and fixed the CALL "SYSTEM" and temporary file issues. It did admit that it was a way better fix that addressed the root cause. Indeed. A shame it didn’t figure it out by itself.
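One detail makes this handoff less trivial than it sounds: COBOL alphanumeric fields are fixed-length and padded with spaces, while C strings are NUL-terminated. Any C helper called from COBOL has to convert at the boundary. The sketch below shows one way to do it. The helper names are hypothetical, and the real COBOL-Admin helpers may handle this differently.

```c
#include <stddef.h>
#include <string.h>

/* Copy a fixed-length, space-padded COBOL field into a NUL-terminated
 * C string, dropping trailing spaces.
 * Returns the resulting length, or -1 if out cannot hold it. */
int cobol_to_c(const char *field, size_t field_len,
               char *out, size_t out_size)
{
    while (field_len > 0 && field[field_len - 1] == ' ')
        field_len--;
    if (field_len + 1 > out_size)
        return -1;
    memcpy(out, field, field_len);
    out[field_len] = '\0';
    return (int)field_len;
}

/* Copy a C string into a fixed-length COBOL field, padding the rest
 * with spaces. Returns the number of characters copied, or -1 (leaving
 * the field untouched) if the value would not fit. */
int c_to_cobol(const char *src, char *field, size_t field_len)
{
    size_t len = strlen(src);
    if (len > field_len)
        return -1;
    memcpy(field, src, len);
    memset(field + len, ' ', field_len - len);
    return (int)len;
}
```

Because the data now travels through memory buffers instead of a shell command line and files in /tmp/, there is nothing left to inject into and nothing for concurrent requests to overwrite.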

I’ve Got a Bad Feeling About This

So I resumed development, more careful than before. I asked Claude to implement the show and edit pages for each resource. I asked it to add reference fields (fields that link to other resources) and make them clickable.

How do you infer from an OpenAPI specification that a field references another resource? There is no standard way.

Claude proposed a custom OpenAPI extension. That’s clever, except it forces users to modify their spec. That defeats the purpose of an admin that works over any OpenAPI API. Is this really a good idea?

This felt off. I knew there was no perfect solution, but this one seemed to offload a big burden on other programs. There had to be a better way. I don’t know how intuition works, but I’m sure that coding agents are devoid of it.

So I asked it to infer references from field names and endpoints instead (e.g., if a post has an author_id and there is an author resource, this probably means a many-to-one relationship between posts and authors). It is not perfect, as irregular pluralization breaks it. It assumes id as the primary key and an Id suffix for foreign keys.
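The heuristic fits in a few lines. The following C sketch is an illustration under stated assumptions, not the COBOL-Admin implementation: `infer_reference` is a hypothetical name, it accepts both an `_id` and an `Id` suffix, and its only pluralization rule is "append an s".

```c
#include <stddef.h>
#include <string.h>

/* Hypothetical heuristic: given a field name such as "author_id" or
 * "authorId" and the list of known resource names, return the index of
 * the resource the field probably references, or -1 if none matches.
 * A resource matches if it equals the stem ("author") or the stem
 * followed by "s" ("authors"). */
int infer_reference(const char *field,
                    const char *const *resources, size_t count)
{
    size_t len = strlen(field);
    size_t stem_len;

    if (len > 3 && strcmp(field + len - 3, "_id") == 0)
        stem_len = len - 3;
    else if (len > 2 && strcmp(field + len - 2, "Id") == 0)
        stem_len = len - 2;
    else
        return -1;                      /* not a foreign key candidate */

    for (size_t i = 0; i < count; i++) {
        size_t rlen = strlen(resources[i]);
        if (strncmp(resources[i], field, stem_len) != 0)
            continue;                   /* resource does not start with the stem */
        if (rlen == stem_len)
            return (int)i;              /* exact match: "author" */
        if (rlen == stem_len + 1 && resources[i][stem_len] == 's')
            return (int)i;              /* naive plural: "authors" */
    }
    return -1;
}
```

With resources `posts`, `authors`, and `categories`, `author_id` resolves to `authors`, but `category_id` resolves to nothing: that is the irregular pluralization limit mentioned above.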

I accepted that trade-off as it works in most cases and could be improved later. Claude naturally made another trade-off. These are the important decisions that should be left to humans, as LLMs aren’t yet smart enough to carefully weigh the pros and cons, especially given their limited context.

Bad Taste

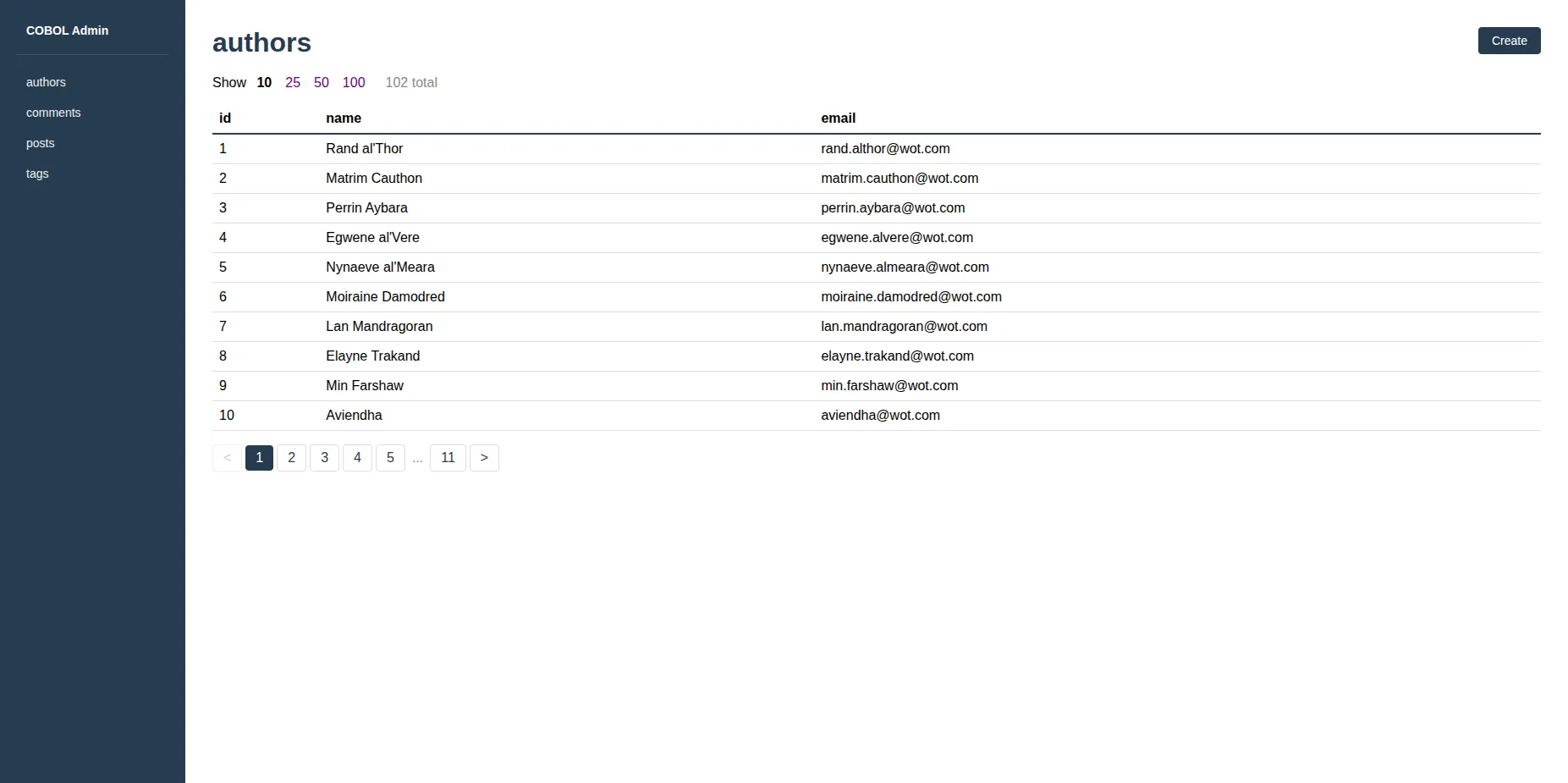

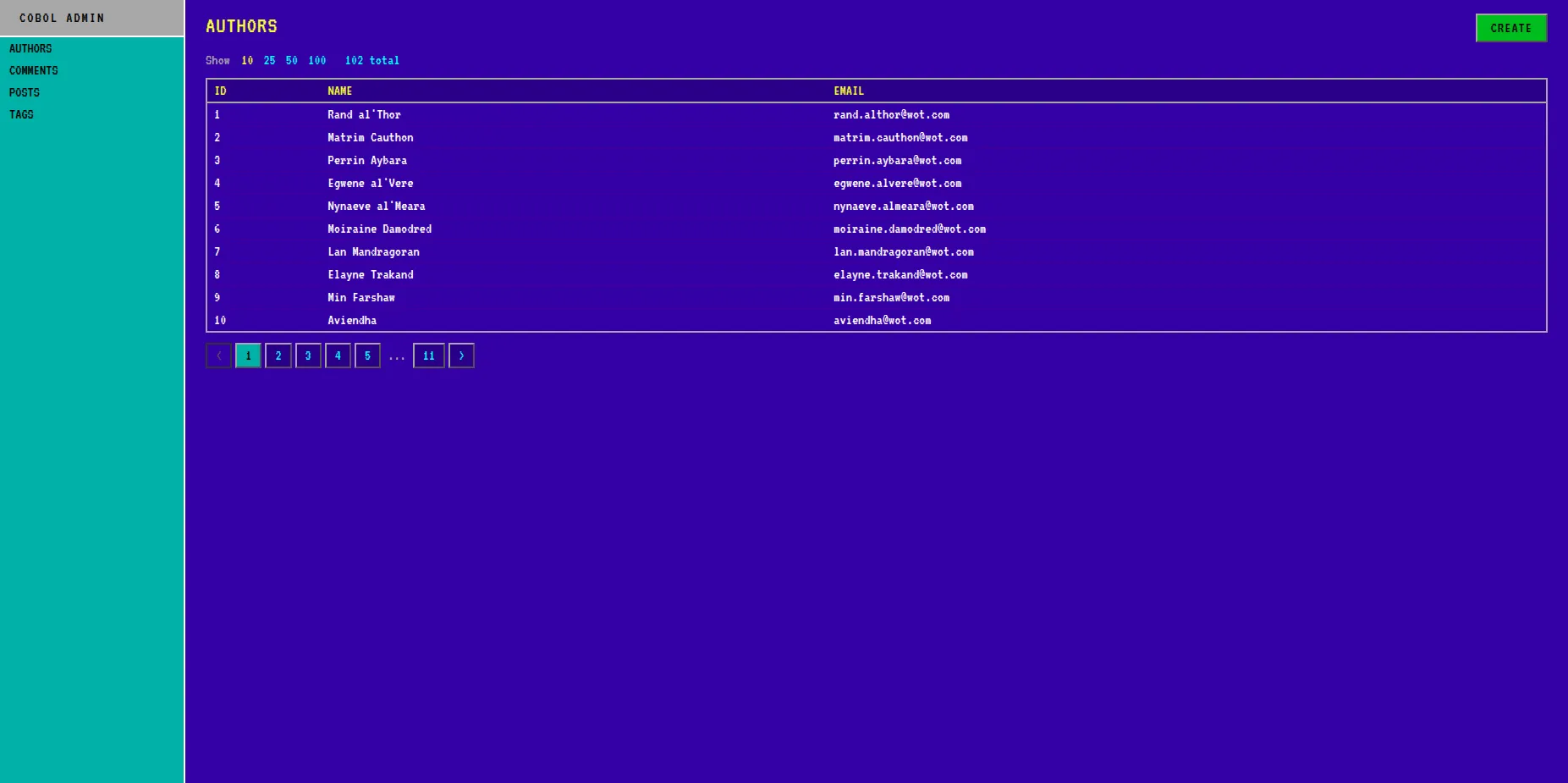

After about a day of discussion with Claude, I managed to get a working COBOL admin. Except the interface looked like a classic (dare I say mainstream?) admin interface from the 2020s:

In fact, all the UIs that I have seen Claude build look the same. Neutral, bland, boring. This one did not feel at all like a proper COBOL application. A COBOL application is blue. It uses fixed fonts. It’s retro.

Fortunately, someone else at Marmelab already explored this territory in the past: Building A Retro React-Admin Theme For Fun And Profit. So I told Claude to read it and asked it to apply the same theme.

It worked:

Much better. Claude did it on the first try, with only a minor issue on some input fields. Funnily enough, it took more time to fix this issue than to apply the theme in the first place.

Conclusion

AI tools are powerful, and they can generate code faster than any developer ever could. But they are not perfect, and they can introduce bugs and security issues in the code they generate. To make the most of these tools, you still need a developer to:

- make architectural decisions

- detect when AI goes off the rails

- determine the good practices to use

- find and fix the issues created by AI

- be responsible for the result

This is the Force of the developers. We’ve gathered it through ~~midi-chlorians~~ years of training and practice. And no AI can wield it for us.

Authors

Full-stack web developer at marmelab, loves functional programming and JavaScript.